Crawl Budget for Enterprise Ecommerce: What’s Changing in 2026

Published: February 17, 2026

For enterprise retailers, crawl budget is the technical currency that dictates speed-to-market. At “Home Depot scale” (1M+ SKUs and tens of millions of URL permutations), crawl efficiency isn’t just about discovery; it’s about revenue recognition. If bots waste finite fetch capacity on low-value filters, duplicate parameter states, or discontinued products instead of your newest high-margin arrivals, you don’t just lose rankings. You lose time, and time is money.

This document is a playbook, not an opinion piece: it defines the ideal state, the operating model to achieve it, the governance artifacts you need to keep it from breaking, and a measurement framework that ties crawl outcomes back to business impact.

Key Takeaways

- How do I keep Googlebot crawling the pages that actually make money (and not wasting crawl on junk URLs)? Build a Zero-Waste Architecture: separate Crawl Capacity (infra performance) from Crawl Demand (value signals), then enforce governance so only canonical, indexable, revenue-driving templates and Anchor Facets stay crawlable/indexable, while Ephemeral States (sorts, sliders, unvalidated facet combos, internal search) are neutralized via URL rules, internal linking constraints, and sitemap hygiene.

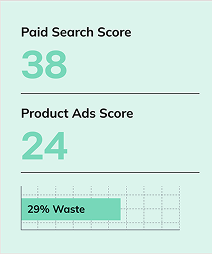

- What are the few things I should measure to know if crawl budget work is actually working? Don’t track crawl volume; track outcomes: Crawl-to-Index Alignment on priority templates, Recrawl Latency for launches/high-margin URLs, Crawl Share by Template, and a simple Wasted Crawl Rate classification from logs. Together they confirm that bots are spending fetches on the right templates and that indexing and refresh speed improve.

- Why does Generative Engine Optimization (GEO) need to be its own program now? Because each LLM ecosystem has its own crawler and retrieval behavior, which creates a separate visibility and risk plane: you need a bot taxonomy + policy matrix (allow/block/rate/cache by bot class) and GEO execution (fact-dense, chunked, structured content) so retrieval bots can represent you accurately, while training bots and spoofed agents don’t create unwanted exposure or infrastructure drag.

The Hidden Cost of Wasted Crawl Budget

At enterprise scale, wasted crawl budget is not a technical nuisance; it’s an infrastructure and revenue liability. Google’s own crawl budget guidance is aimed at sites with roughly one million or more unique pages (or 10,000+ pages whose content changes daily), and past that scale, bots can miss meaningful portions of inventory on unoptimized platforms. That’s not a ranking problem. It’s a discoverability constraint.

Crawl waste tends to show up in four primary cost centers:

1) Revenue Latency

When bots crawl discontinued products, thin facet combinations, and parameter junk, they aren’t crawling new launches, seasonal inventory, or high-margin SKUs. This creates recrawl latency: the delay between publishing (or updating) a URL and the next bot fetch, which in turn delays search visibility.

| Symptom | What It Usually Means | How to Confirm | Fix |
| --- | --- | --- | --- |
| New products take days/weeks to appear | Crawl share is trapped in low-value URL states | Logs show heavy crawling of params/facets/sorts vs new PDP/PLP URLs | Neutralize junk states + prioritize launch sitemaps |
| Large crawl volume, low index outcomes | Crawl-to-index alignment is poor | Sample crawled URLs → classify indexable/canonical; check index status by sampling | Canonical consolidation + block/redirect junk + strengthen anchor uniqueness |
| Seasonal peaks underperform | Priority pages not refreshed fast enough | Recrawl latency spikes in peak windows | Segment sitemaps + internal linking boosts to seasonal anchors |

2) Infrastructure Overhead

Crawl capacity is influenced by server responsiveness. When response times slow or errors rise, bots throttle to protect infrastructure. Wasted crawl doesn’t just reduce efficiency: when it adds load that slows responses, it can suppress future crawl allocation.

| Infra Signal | Bot Outcome | What to Track | What to Do |
| --- | --- | --- | --- |
| Rising TTFB / latency spikes | Lower fetch rate | Bot TTFB p50/p95 by template | Caching, reduce expensive render paths, stabilize origin |
| Increased 5xx/timeouts | Throttling + missed discovery | 5xx rate by template/route | SLO-based release gates, rollback plan, load isolation |
| Bot concurrency surges | Origin strain without business value | Bot traffic share + bandwidth by bot class | Rate-limit low-value bots, cache bot responses, reduce crawlable junk |
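
To make the “Bot TTFB p50/p95 by template” row concrete, here is a minimal Python sketch. It assumes your access logs have already been parsed into records carrying a template label and a time-to-first-byte value for verified search-bot requests; the `BotHit` record and its field names are hypothetical, and the percentiles use the simple nearest-rank method.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class BotHit:
    template: str   # hypothetical template label, e.g. "PDP" or "PLP"
    ttfb_ms: float  # time to first byte served to a verified search bot


def percentile(sorted_values: list, pct: float) -> float:
    """Nearest-rank percentile of an already-sorted, non-empty list."""
    if not sorted_values:
        raise ValueError("no values")
    rank = math.ceil(pct / 100 * len(sorted_values))
    return sorted_values[max(rank, 1) - 1]


def ttfb_by_template(hits: list) -> dict:
    """Group bot fetches by template and return {template: (p50, p95)}."""
    grouped = defaultdict(list)
    for hit in hits:
        grouped[hit.template].append(hit.ttfb_ms)
    result = {}
    for template, values in grouped.items():
        values.sort()
        result[template] = (percentile(values, 50), percentile(values, 95))
    return result


if __name__ == "__main__":
    sample = [BotHit("PDP", t) for t in (120, 140, 160, 180, 900)]
    sample += [BotHit("PLP", t) for t in (300, 320, 340)]
    for template, (p50, p95) in sorted(ttfb_by_template(sample).items()):
        print(f"{template}: p50={p50}ms p95={p95}ms")
```

With the sample data, PDP reports p50=160ms and p95=900ms: a single slow fetch sets the tail, which is the kind of spike worth lining up against throttling and release timestamps.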

3) Index Dilution

Crawl waste often correlates with index bloat: low-value or duplicative URLs retained in the index. More indexed pages do not equal more visibility. Thin redundancy weakens demand signals, which can reduce recrawl frequency on priority templates.

| Index Dilution Pattern | Why It Hurts | How to Diagnose | Fix |
| --- | --- | --- | --- |
| Many near-duplicate facets indexed | Bots perceive redundancy | Sample indexed facet states + SERP overlap | Anchor-only facet policy + canonical consolidation |
| Soft-404/redirect URLs in index | Crawl and trust waste | Coverage sampling + logs of soft-404 patterns | Clean sitemaps, correct status codes, redirect hygiene |
| Discontinued inventory dominates | Demand shifts away from fresh products | Segment index sample by inventory status | Product lifecycle rules: keep/redirect/noindex/410 |
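
Lifecycle rules hold up best when they are written down as an explicit decision rather than applied case by case. The sketch below encodes one possible keep/redirect/noindex/410 policy; the `Product` fields, the 90-day sessions signal, and the `demand_floor` threshold are all assumptions to replace with your own inventory and analytics data.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    KEEP = "200, indexable"
    REDIRECT = "301 to replacement"
    NOINDEX = "200 + noindex"
    GONE = "410 Gone"


@dataclass
class Product:
    sku: str
    discontinued: bool
    restock_expected: bool          # hypothetical inventory-system flag
    replacement_url: Optional[str]  # closest successor, if merchandising named one
    organic_sessions_90d: int       # hypothetical search-demand signal
    needed_by_customers: bool       # e.g. linked from manuals or order history


def lifecycle_action(p: Product, demand_floor: int = 50) -> Action:
    """Illustrative lifecycle rule; the ordering and threshold are assumptions."""
    if not p.discontinued or p.restock_expected:
        return Action.KEEP  # live or temporarily out of stock: keep the URL
    if p.replacement_url:
        return Action.REDIRECT  # consolidate signals into the successor
    if p.organic_sessions_90d >= demand_floor:
        return Action.KEEP  # still earns search visits: keep indexable, show alternatives
    if p.needed_by_customers:
        return Action.NOINDEX  # must stay reachable for users but has no search value
    return Action.GONE  # no demand, no successor: tell bots to stop fetching
```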

4) AI Exposure Risk

AI crawler traffic is rising quickly. Unlike traditional search bots, some AI agents ingest content for training/synthesis without guaranteed attribution. Every unnecessary crawl event becomes a strategic tradeoff: infra cost, data exposure, or foregone discoverability elsewhere.

| Risk | What It Means | Governance Response |
| --- | --- | --- |
| Training ingestion without attribution | Content absorbed into model weights | Decide what is trainable; restrict sensitive areas |
| Retrieval visibility impacts discovery | AI answers may replace SERP clicks | Allow retrieval to the content you want represented |
| Spoofing/scraping | AI UAs can be impersonated | Validate identity, detect anomalies, block unknowns |

How Google Allocates Crawl Budget at Enterprise Scale

Google explains crawl budget as a balance between how much your site can handle and how much Google actually wants to crawl based on indexing needs. In their words, “Taking crawl rate and crawl demand together we define crawl budget as the number of URLs Googlebot can and wants to crawl.” When enterprise sites generate massive volumes of low-value URL variants (like faceted navigation and session identifiers), that balance breaks, and important pages can take longer to be discovered and refreshed in the index.

Forces that govern crawl allocation

| Force | What It Is | What It Affects | Typical Enterprise Failure | Fix |
| --- | --- | --- | --- | --- |
| Crawl Capacity | Safe fetch rate | How many URLs can be crawled | Throttling during peak cycles | SLOs + caching + stability |
| Crawl Demand | Priority signals | Which URLs are crawled more | Demand diluted across junk states | Anchor-only indexing + consolidation |
| URL uniqueness | Differentiated value | Indexation likelihood | Duplicate facets get crawled but not indexed | Facet governance + content requirements |
| Internal linking | Discovery paths | Crawl depth and priority | Facet traps dominate crawl paths | Link only to anchors; hide ephemeral states |
| Freshness signals | Change relevance | Recrawl frequency | Lastmod spam or stale timestamps | Controlled lastmod rules + clean segmentation |

Faceted Navigation & Parameter Governance

Enterprise crawl governance requires a binary classification of navigation logic:

- Anchor Facets: search-validated, intentionally indexable facet states

- Ephemeral States: infinite/low-value states that must be neutralized (sorts, view toggles, sliders, unvalidated combos)

Intent-based triage: prevent faceted navigation from becoming a crawl trap

| Navigation Element | Usually Should Be… | Why | Control Mechanism |
| --- | --- | --- | --- |
| High-demand facets (brand, core attributes) | Anchor | Captures real search demand | Crawlable links + canonical + indexable |
| Mid-demand facets (conditional) | Conditional anchor | Demand varies by category | Allowed only where validated |
| Sort, view, internal search parameters | Ephemeral | No index value; infinite states | No crawlable links; JS-only; robots.txt block or noindex (not both: a blocked URL’s noindex is never read) |
| Multi-facet long-tail combos | Ephemeral by default | Fragment demand | Canonical to nearest anchor; exclude from sitemaps |

The Facet Governance Matrix

| Facet / Combo | Intent & Demand Evidence | Classification | URL Rule | Content Requirement | Internal Linking Rule | Sitemap Rule |
| --- | --- | --- | --- | --- | --- | --- |
| Brand | High demand | Anchor | Index + self-canonical | Unique intro + merchandising logic | Crawlable nav allowed | Include |
| Color (category-specific) | Medium demand | Conditional anchor | Index only on validated categories | Differentiators + filters explanation | Linked only where validated | Include if anchor |
| Price slider ranges | None / infinite | Ephemeral | Neutralize (block/noindex/canonical) | None | JS-only interaction | Exclude |
| Sort by price/newest | None | Ephemeral | Non-indexable | None | Never crawlable | Exclude |
| 3+ facet combos | Sparse demand | Ephemeral default | Canonical consolidation | None | Don’t expose | Exclude |
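
One way to keep the matrix enforceable is to encode it as data that templates, sitemap jobs, and link components all read from. This is a minimal sketch under assumptions: the parameter names (`sort`, `view`, `price_min`, `price_max`, `q`), the validated category IDs, and the two-facet cutoff are illustrative stand-ins for the rows above.

```python
# Hypothetical encoding of the Facet Governance Matrix.
ALWAYS_ANCHOR = {"brand"}
# Facets that are anchors only in categories where demand was validated.
CONDITIONAL_ANCHOR = {"color": {"paint", "appliances"}}
# Sorts, view toggles, price-slider bounds and internal search are never anchors.
EPHEMERAL_PARAMS = {"sort", "view", "price_min", "price_max", "q"}
MAX_ANCHOR_FACETS = 2  # 3+ facet combinations are ephemeral by default

RULES = {
    "anchor": {"indexable": True, "crawlable_links": True, "in_sitemap": True},
    "ephemeral": {"indexable": False, "crawlable_links": False, "in_sitemap": False},
}


def classify_facet_state(category: str, params: dict) -> str:
    """Classify a PLP state (category + query parameters) as anchor or ephemeral."""
    if any(name in EPHEMERAL_PARAMS for name in params):
        return "ephemeral"
    if len(params) > MAX_ANCHOR_FACETS:
        return "ephemeral"
    for name in params:
        validated = name in ALWAYS_ANCHOR or category in CONDITIONAL_ANCHOR.get(name, set())
        if not validated:
            return "ephemeral"  # facet without demand evidence in this category
    return "anchor"  # includes the bare category page (no params)


def rules_for(category: str, params: dict) -> dict:
    """Look up index, linking and sitemap rules for a facet state."""
    return RULES[classify_facet_state(category, params)]
```

Keeping the rules in one table means a new facet cannot become crawlable by accident: until someone adds it to the allowlists, it classifies as ephemeral everywhere.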

Advanced Discovery: Sitemap Indexing & Segmentation

At scale, you don’t “have a sitemap.” You operate a sitemap fleet managed by a sitemap index file. This gives you control and diagnosis: you can pinpoint where indexation is lagging and where crawl is being wasted.

Sitemap segmentation by business value

| Sitemap Segment | Purpose | Inclusion Rule | What You Monitor |
| --- | --- | --- | --- |
| High-margin products | Maximize revenue coverage | Canonical + 200 + indexable only | Index lag + recrawl latency |
| New launches | Speed-to-market | Newly published + clean | Time-to-crawl/time-to-index |
| Clearance/price-sensitive | Rapid refresh | Inventory/price change pages | Refresh frequency + coverage |
| Category anchors | Consolidate demand | Anchor-only | Visibility + crawl alignment |
| Editorial/help content | Demand capture | Canonical + evergreen | Crawl share vs conversion influence |
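
A sitemap fleet can be generated from segment lists in a few dozen lines. This sketch follows the sitemaps.org protocol (a `<urlset>` per child file, a `<sitemapindex>` pointing at them, and the 50,000-URL-per-file limit); the segment names, file-naming scheme, and `base_url` are assumptions, it only enforces the URL-count limit (not the 50 MB uncompressed size limit), and it presumes every URL passed in has already cleared your sitemap hygiene checks.

```python
from datetime import date
from xml.sax.saxutils import escape

MAX_URLS_PER_SITEMAP = 50_000  # sitemaps.org per-file URL limit
NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def urlset_xml(entries) -> str:
    """entries: iterable of (loc, lastmod_date) tuples -> one <urlset> document."""
    lines = ['<?xml version="1.0" encoding="UTF-8"?>', f'<urlset xmlns="{NS}">']
    for loc, lastmod in entries:
        lines.append(f"  <url><loc>{escape(loc)}</loc><lastmod>{lastmod.isoformat()}</lastmod></url>")
    lines.append("</urlset>")
    return "\n".join(lines)


def build_fleet(segments: dict, base_url: str) -> dict:
    """segments: {segment_name: [(loc, lastmod), ...]}.

    Returns {filename: xml} for every child sitemap plus sitemap_index.xml.
    The "sitemap-<segment>-<n>.xml" naming is a hypothetical convention.
    """
    files = {}
    for name, entries in segments.items():
        for start in range(0, len(entries), MAX_URLS_PER_SITEMAP):
            chunk = entries[start : start + MAX_URLS_PER_SITEMAP]
            filename = f"sitemap-{name}-{start // MAX_URLS_PER_SITEMAP + 1}.xml"
            files[filename] = urlset_xml(chunk)
    index = ['<?xml version="1.0" encoding="UTF-8"?>', f'<sitemapindex xmlns="{NS}">']
    for filename in files:
        index.append(f"  <sitemap><loc>{escape(base_url + filename)}</loc></sitemap>")
    index.append("</sitemapindex>")
    files["sitemap_index.xml"] = "\n".join(index)
    return files
```

Because each segment gets its own files, index coverage in Search Console can be read per segment, which is what makes the fleet a diagnostic surface and not just a discovery feed.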

Sitemap hygiene (non-negotiables)

| Rule | Why It Matters | How to Validate |
| --- | --- | --- |
| Only canonical, indexable, 200 URLs | Prevents burning crawl on junk | Pre-submit validation + periodic audits |
| Exclude redirects and soft-404 | Avoid wasting fetches | Sample URLs and verify status/canonical |
| Use `<lastmod>` with discipline | Signals recrawl priorities | Compare lastmod to real changes (stock/price/content) |
| Keep sitemaps aligned to governance | Prevent re-inflation | Spot-check: if it’s not allowed to index, it can’t be in a sitemap |
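
The hygiene rules translate naturally into a pre-submit gate. In this sketch, a URL is eligible only if it returns 200, is self-canonical, and is not noindexed, robots-blocked, or a soft-404; `lastmod` advances only when a fingerprint of the fields that matter (here: price, stock, and body copy) changes. The `UrlRecord` fields and the choice of fingerprinted fields are assumptions about what your crawler or CMS can supply.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass
class UrlRecord:
    url: str
    status: int
    canonical: str        # canonical URL declared on the page
    noindex: bool
    robots_blocked: bool
    soft_404: bool        # 200 response that is really an empty/"not found" page
    content_hash: str     # fingerprint of meaningful fields (see fingerprint())
    lastmod: Optional[str] = None  # ISO date currently published in the sitemap


def sitemap_eligible(r: UrlRecord) -> bool:
    """Only canonical, indexable, crawlable, 200-status URLs may enter a sitemap."""
    return (
        r.status == 200
        and r.canonical == r.url
        and not r.noindex
        and not r.robots_blocked
        and not r.soft_404
    )


def fingerprint(price: str, stock: str, body: str) -> str:
    """Hash only meaningful fields so cosmetic template changes don't bump lastmod."""
    return hashlib.sha256("|".join((price, stock, body)).encode("utf-8")).hexdigest()


def next_lastmod(r: UrlRecord, previous_hash: Optional[str], today: str) -> Optional[str]:
    """Advance lastmod only when the fingerprint actually changed."""
    if previous_hash != r.content_hash:
        return today
    return r.lastmod
```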

Log-File Analysis: Traditional Crawlers vs. AI Agents

Log data shows what bots actually do: which templates they hit, which states they waste time on, and where throttling begins. At enterprise scale, it’s the operational truth source.

Functional bot taxonomy (you can’t govern what you don’t classify)

A functional bot taxonomy is the starting point for bot governance: you can’t control crawl cost, visibility, or exposure risk if you treat every crawler as “just a bot.” Enterprise ecommerce teams should classify bots by what they’re trying to do (index for search, retrieve content for real-time AI answers, ingest content for training, or scrape without authorization), because each class has a different value exchange and a different risk profile. Once bots are categorized by function, you can apply consistent policies (allow, restrict, rate-limit, cache, or block) that protect revenue-critical crawl paths while enabling the specific forms of visibility you actually want.

| Bot Class | What They Do | Why It Matters | Default Policy |
| --- | --- | --- | --- |
| Search crawlers | Discover/index for search | Core revenue channel | Allow; keep paths clean |
| Retrieval crawlers | Fetch in real time for RAG answers | Drives AI visibility | Allow to approved content |
| Training crawlers | Ingest content to update weights | Data risk + asymmetric value | Restrict sensitive areas; consider conditional access |
| Unknown/spoofed scrapers | Harvest content | Pure risk | Block and monitor |
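
A first-pass classifier can map the user agent a request claims to one of the classes above. The token list is an illustrative assumption based on publicly documented crawler names (Googlebot, bingbot, OAI-SearchBot, ChatGPT-User, PerplexityBot, GPTBot, ClaudeBot, CCBot); vendors add and rename crawlers, so check it against each provider’s documentation. A user agent is only a claim, so this output should feed identity verification, never replace it.

```python
# Illustrative UA tokens per bot class (an assumption; verify with each vendor).
BOT_CLASSES = {
    "search": ("Googlebot", "bingbot"),
    "retrieval": ("OAI-SearchBot", "ChatGPT-User", "PerplexityBot"),
    "training": ("GPTBot", "ClaudeBot", "CCBot"),
}
# Generic hints that a client self-identifies as automated without a known token.
GENERIC_BOT_HINTS = ("bot", "crawler", "spider", "scraper")


def claimed_bot_class(user_agent: str) -> str:
    """Classify by the *claimed* user agent; identity must still be verified."""
    ua = user_agent.lower()
    for bot_class, tokens in BOT_CLASSES.items():
        if any(token.lower() in ua for token in tokens):
            return bot_class
    if any(hint in ua for hint in GENERIC_BOT_HINTS):
        return "unknown"  # self-declared bot we have no policy for
    return "undeclared"  # a browser, or a bot hiding its identity: needs behavioral checks
```

Separating “unknown” from “undeclared” matters: blocking every unrecognized user agent would block real shoppers, so undeclared traffic is better handled by rate and behavior signals at the WAF.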

Bot Policy Matrix

A bot policy matrix is the enforcement layer that turns “we should manage bots” into an operational control system. Instead of treating all crawlers the same, enterprise ecommerce teams classify bots by intent (search indexing, AI retrieval, AI training, unknown/spoofed) and apply consistent rules for access, throttling, and caching. The goal is to protect crawl capacity for revenue templates, enable visibility where it matters (e.g., AI answer surfaces), and reduce infrastructure and exposure risk by restricting or blocking non-essential and untrusted traffic.

| Bot Class | Allow/Block | Rate Limiting (Strictness) | Cache Strategy | Allowed Areas | Notes |
| --- | --- | --- | --- | --- | --- |
| Search crawlers | Allow | Low | Standard | Canonical revenue templates | Optimize for discovery |
| Retrieval crawlers | Allow | Medium | Aggressive | Approved PDP/PLP/help/brand | Supports “AI answers” surfaces |
| Training crawlers | Conditional | Higher | Cache + restrict | Non-sensitive areas only | Protect competitive intelligence |
| Unknown/spoofed | Block | N/A | N/A | None | Validate identity and behavior |
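
The allow/block and allowed-areas columns of the matrix can be compiled into robots.txt. Two details matter: a crawler that matches a named user-agent group ignores the `*` group, so shared rules must be repeated in every group, and robots.txt cannot express rate limits or cache strategy, which have to live at the CDN/WAF layer. The paths and per-bot rules here are hypothetical; the GPTBot group mirrors a “buying guides only” training policy.

```python
import urllib.robotparser

# Hypothetical policy: user-agent token -> ordered (directive, path) rules.
SHARED = [("Disallow", "/cart"), ("Disallow", "/checkout"), ("Disallow", "/account")]
POLICY = {
    "Googlebot": SHARED + [("Disallow", "/search")],
    "OAI-SearchBot": SHARED + [("Disallow", "/search")],
    "GPTBot": [("Allow", "/guides/"), ("Disallow", "/")],  # training: guides only
    "*": SHARED + [("Disallow", "/search")],
}


def render_robots_txt(policy: dict) -> str:
    """Emit one user-agent group per bot; an empty rule list means allow all."""
    groups = []
    for agent, rules in policy.items():
        lines = [f"User-agent: {agent}"]
        lines += [f"{directive}: {path}" for directive, path in rules] or ["Disallow:"]
        groups.append("\n".join(lines))
    return "\n\n".join(groups) + "\n"


def can_fetch(robots_txt: str, agent: str, url: str) -> bool:
    """Sanity-check the generated file with the standard-library parser."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)
```

Python’s `urllib.robotparser` applies the first matching rule in a group, while Google applies the most specific (longest) match; listing each `Allow` before the broader `Disallow` keeps both interpretations in agreement for these rules.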

Verification asymmetry

Verification asymmetry is the reality that “bot identification” isn’t equally reliable across crawler types, especially as AI and scraper traffic grows. Some bots provide strong verification signals, while others can be easily impersonated or lack consistent identity methods, which increases the risk of infrastructure strain and unauthorized scraping.

This table outlines the most common verification gaps enterprise ecommerce teams face and the practical controls (validation, allowlists, monitoring, and traffic management) that reduce spoofing risk without accidentally blocking legitimate crawlers.

| Challenge | Risk | Mitigation |
| --- | --- | --- |
| UA spoofing | Fake “good bots” scrape at scale | Identity validation + behavioral anomaly detection |
| Limited reverse DNS support | Harder verification | Maintain allowlists from provider guidance; monitor drift |
| Traffic spikes | Origin strain | WAF rules + throttling + caching policies |
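
For crawlers that support it, DNS verification closes the spoofing gap: reverse-resolve the requesting IP, check the hostname against the provider’s domains, then forward-resolve that hostname and confirm it returns the same IP. Google documents this method for Googlebot with the domain suffixes used below. For providers that publish IP ranges instead, a CIDR allowlist check covers the “limited reverse DNS support” row; the `examplebot` entry and its range are placeholders. The resolver functions are injectable so the logic can be tested offline.

```python
import ipaddress
import socket

# Domains Google documents for verifying its crawlers via DNS.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com", ".googleusercontent.com")

# Hypothetical allowlist: in practice, load each provider's published IP ranges
# and refresh them on a schedule. These are RFC 5737 documentation ranges.
PUBLISHED_RANGES = {"examplebot": [ipaddress.ip_network("192.0.2.0/24")]}


def in_published_ranges(ip: str, bot: str) -> bool:
    """True if the IP falls inside a provider-published range for this bot."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in PUBLISHED_RANGES.get(bot, []))


def verify_by_dns(ip, suffixes, reverse=socket.gethostbyaddr, forward=socket.gethostbyname_ex):
    """Reverse-resolve, check the domain, then forward-resolve and confirm the IP.

    The forward step matters because whoever controls an IP's PTR record can
    claim any hostname. gethostbyname_ex is IPv4-only; use getaddrinfo for IPv6.
    """
    try:
        hostname = reverse(ip)[0].rstrip(".")
    except OSError:  # socket.herror and socket.gaierror subclass OSError
        return False
    if not hostname.endswith(suffixes):
        return False
    try:
        _, _, addresses = forward(hostname)
    except OSError:
        return False
    return ip in addresses
```

In production these lookups should be cached per IP, since resolving DNS on every request would add exactly the latency the error budget is meant to protect.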

Generative Engine Optimization (GEO): The AI-First Shift

GEO should be treated as its own discipline, not a subsection of SEO. The reason is structural: LLM ecosystems operate with their own crawler behaviors and retrieval systems, and those systems influence whether your brand is selected, quoted, or referenced in synthesized answers.

Where SEO focuses on ranking for keywords, GEO focuses on being selected as a trusted source for AI-mediated responses, often without the user ever visiting a SERP.

Why GEO matters now (especially for enterprise retail)

| Reality | What It Changes | What You Must Do |
| --- | --- | --- |
| Each LLM ecosystem has its own crawler behavior | “Google-only crawl policy” is incomplete | Maintain bot taxonomy + policy by class and intent |
| Retrieval answers shape demand | Users may convert without clicking SERPs | Build retrieval-friendly content and approved access paths |
| Training ingestion has risk | Competitive info can be absorbed without attribution | Decide what is trainable vs restricted; publish “safe-to-ingest” knowledge intentionally |
| AI visibility is a separate scoreboard | Ranking ≠ selection | Measure “Share of Model” signals and AI-sourced demand |

The GEO execution framework: Semantic Footprint

| Pillar | What It Means | What to Implement | How to Validate |
| --- | --- | --- | --- |
| Fact Density | Unique, verifiable information that models can reuse | Add constraints, numbers, comparisons, edge cases in HTML | Better extraction quality + stronger citations |
| Content Chunking | Each section answers one question cleanly | Clear headings, tight paragraphs, Q&A blocks | Improved retrieval relevance |
| Structured Data | Machine-readable “cheat sheet” | Product, FAQPage, Organization (plus relevant extensions) | More consistent extraction |
| Trust Signals | Provenance and credibility | Editorial standards, author/reviewer, references | Increased selection likelihood over time |
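
For the Structured Data pillar, a PDP can emit a schema.org `Product` block generated from catalog data, so specs reach machines as name/value pairs instead of prose. This is a minimal sketch: the input record’s keys are hypothetical, and the property set is a small subset of what schema.org and Google’s product structured data support.

```python
import json


def product_jsonld(p: dict) -> str:
    """Build a schema.org Product script tag from a (hypothetical) catalog record."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": p["name"],
        "sku": p["sku"],
        "brand": {"@type": "Brand", "name": p["brand"]},
        "description": p["description"],
        "offers": {
            "@type": "Offer",
            "price": f'{p["price"]:.2f}',
            "priceCurrency": p["currency"],
            "availability": "https://schema.org/InStock" if p["in_stock"] else "https://schema.org/OutOfStock",
            "url": p["url"],
        },
    }
    # Reusable facts: expose specs as PropertyValue pairs rather than burying them in copy.
    if p.get("specs"):
        data["additionalProperty"] = [
            {"@type": "PropertyValue", "name": k, "value": v} for k, v in p["specs"].items()
        ]
    payload = json.dumps(data, ensure_ascii=False)
    # Escape "</" so text like "</script>" inside a description can't close the tag early.
    payload = payload.replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"
```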

GEO governance: what bots should be allowed to see

| Content Area | Retrieval Crawlers | Training Crawlers | Why |
| --- | --- | --- | --- |
| PDP core facts/specs | Allow | Conditional | Visibility vs competitive exposure |
| Buying guides/category education | Allow | Often allow | High brand value, low sensitivity |
| Proprietary pricing logic/internal ops | Block | Block | Pure competitive risk |
| Support docs/policies | Allow | Often allow | Ensures accurate AI answers |

Engineering Operations: The SEO Error Budget

Borrowing from SRE, the SEO Error Budget reframes crawl health and performance as quantifiable reliability. This prevents “silent regressions” that slowly erode crawl capacity and indexation outcomes.

The formula

Error Budget = 100% − SLO

Example SLO: “99.9% of category page requests must return 200 OK within 500ms.”

Operational policy (what teams do when budgets change)

| Budget State | Remaining Budget | Allowed Activity | Required Actions |
| --- | --- | --- | --- |
| Healthy | >75% | High-velocity deployments | Standard checks |
| Amber | 25–75% | Cautious changes | Pre-deploy SEO audits; performance verification |
| Exhausted | <25% | Feature freeze | Fix latency/errors first; rollback high-risk releases |
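
A worked example of the formula: with the 99.9% SLO above, the error budget is 0.1%, so across a window of 10,000,000 category-page requests (a hypothetical volume), 10,000 requests may fail or exceed 500ms. The sketch below turns that arithmetic into the three budget states; how you count a “bad” request (status, latency, or both) is an assumption to align with your own SLO definition.

```python
def error_budget_state(slo: float, total: int, bad: int) -> tuple:
    """Return (remaining budget fraction, policy state) for one measurement window.

    slo:   target success ratio, e.g. 0.999
    total: requests in the window
    bad:   requests that failed or missed the latency target
    """
    allowed_bad = (1 - slo) * total  # Error Budget = 100% - SLO, expressed in requests
    if allowed_bad <= 0:
        raise ValueError("SLO must be below 100% and the window non-empty")
    remaining = max(0.0, 1 - bad / allowed_bad)
    if remaining > 0.75:
        state = "Healthy"
    elif remaining >= 0.25:
        state = "Amber"
    else:
        state = "Exhausted"
    return remaining, state
```

With those numbers, 2,000 bad requests leave about 80% of the budget (Healthy), 5,000 leave 50% (Amber), and 9,000 leave 10% (Exhausted).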

ROI & Measurement: Connecting Crawl to Revenue

To measure crawl efficiency, stop reporting “pages crawled.” Report Revenue Impact of Discoverability. Crawl allocation determines how quickly revenue assets enter the index, how frequently they refresh, and how reliably they remain visible during demand peaks.

What to track

| Business Question | Crawl Metric | Data Source | Attribution Approach |
| --- | --- | --- | --- |
| Are launches monetizing faster? | Recrawl latency on launch URLs | Logs + publish timestamps | Pre/post comparison with seasonality controls |
| Are we wasting crawl? | Wasted crawl rate | Log classification | Tie waste reduction to increased crawl share on priority templates |
| Is index health improving where it matters? | Crawl-to-index alignment (priority templates) | Logs + index sampling | Track alignment by template + revenue tier |
| Does this impact revenue? | Organic sessions/conversions by segment | Analytics + GSC | Controlled category bucket testing |

The 6 Most Important Crawl Budget Practices for Enterprise Ecommerce Retailers

Here are the six crawl budget practices that matter most at enterprise scale:

1) Build a Crawl Governance System (URL Taxonomy + Facet Governance + Change Control)

At enterprise scale, crawl budget problems usually aren’t “Google doesn’t crawl us.” They’re “we allowed too many URL states to exist.” The single biggest unlock is treating crawl like a governed system, not a byproduct of your platform.

That starts with a URL state taxonomy that classifies every major URL type your site can generate (PDPs by lifecycle, category/PLP types, faceted states, pagination, internal search, tracking params, session params). Once states are classified, you formalize what’s allowed to be crawlable/indexable via a Facet Governance Matrix that separates Anchor Facets (validated, demand-driven, intentionally indexable) from Ephemeral States (sorts, sliders, view toggles, unvalidated facet combos, internal search results).

Most enterprise retailers regress because new filters, merch rules, or platform updates quietly reintroduce crawl traps. That’s why governance must include change control: new indexable states require approval, documentation, and monitoring. When governance exists, crawl is no longer merely “managed”; it’s controlled.

| Governance Component | What It Does | What “Good” Looks Like |
| --- | --- | --- |
| URL State Taxonomy | Classifies every URL type and its default rules | Every URL fits a category with known crawl/index policy |
| Facet Governance Matrix | Defines which facets/combos can be anchors | Only validated facets are crawlable/indexable |
| Change Control | Prevents reinflation from product/dev changes | New states require sign-off + post-launch monitoring |
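
Change control can be automated as a CI check. In this sketch, the URL state taxonomy is a list of regex patterns, each with a default index policy; any path that matches no pattern surfaces as `unclassified`, and any indexable state without sign-off fails the check. The patterns, state names, and approval registry are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class UrlState:
    name: str
    pattern: str     # regex over path + query (hypothetical URL conventions)
    indexable: bool  # default index policy for this state


TAXONOMY = [
    UrlState("pdp", r"^/p/[\w-]+$", True),
    UrlState("plp", r"^/c/[\w-]+$", True),
    UrlState("plp_sorted", r"^/c/[\w-]+\?.*\bsort=", False),
    UrlState("internal_search", r"^/search\?", False),
]
APPROVED_INDEXABLE = {"pdp", "plp"}  # states with documented sign-off


def classify(path_and_query: str) -> str:
    """Map a URL to its taxonomy state; 'unclassified' signals a governance gap."""
    for state in TAXONOMY:
        if re.search(state.pattern, path_and_query):
            return state.name
    return "unclassified"


def change_control_violations(taxonomy, approved) -> list:
    """Indexable states without sign-off; a non-empty result should fail the build."""
    return [s.name for s in taxonomy if s.indexable and s.name not in approved]
```

Running `classify` over a daily sample of log URLs also gives a simple health metric: the share of bot hits landing on `unclassified` states should trend toward zero.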

2) Eliminate Crawl Traps at the Source (Facets, Parameters, Internal Search, Infinite States)

At scale, crawl waste is rarely caused by content volume; it’s caused by state explosion. Faceted navigation and parameters can generate millions of permutations that bots will happily explore if you give them crawlable paths. If those permutations are thin or duplicative, you get the worst of both worlds: heavy crawling and low index outcomes.

The authoritative approach is simple: make it impossible for bots to waste crawl. That means:

- Ephemeral states should not be discoverable through crawlable internal links.

- Long-tail facet permutations should canonicalize to the nearest demand-backed anchor state.

- Internal search results should be treated as functional pages, not indexable assets.

- Tracking/session parameters should never create crawlable or indexable surfaces.

This isn’t “technical cleanup.” It’s a demand consolidation strategy: you’re concentrating signals on a smaller set of URLs that deserve to be recrawled frequently and indexed reliably.

| Common Crawl Trap | Why It Burns Crawl | Enterprise Fix Pattern |
| --- | --- | --- |
| Sort/view parameters | Infinite states, no search demand | Remove crawlable links + keep states non-indexable |
| Price sliders/ranges | Near-infinite permutations | JS-only interaction + canonical consolidation |
| Multi-facet long-tail combos | Fragmented demand, duplicate content | Anchor-only indexing + canonical to anchor |
| Internal site search URLs | Thin/volatile, unlimited queries | Noindex + block discovery paths; keep out of sitemaps |
| Tracking/session params | Duplicate URLs at scale | Parameter handling rules + canonicalization |
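
Canonicalizing to the nearest anchor is easier to get right with an allowlist than a blocklist. This sketch keeps only approved anchor-facet parameters, in a fixed order so each state has exactly one canonical form, and drops everything else, including `utm_*`, click IDs, session IDs, sorts, and price ranges. The facet names and two-facet cap are assumptions, and per-category facet validation is left out for brevity.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical anchor-facet allowlist, in the order params should appear.
ANCHOR_FACETS = ("brand", "color")
MAX_FACETS = 2  # deeper combinations collapse to the nearest anchor


def canonical_url(url: str) -> str:
    """Return the anchor URL a facet/parameter state should canonicalize to.

    Anything not on the allowlist (utm_*, gclid, session IDs, sort, view,
    price ranges, unvalidated facets) is dropped rather than enumerated.
    """
    parts = urlsplit(url)
    params = dict(parse_qsl(parts.query))  # last value wins for repeated keys
    kept = [(name, params[name]) for name in ANCHOR_FACETS if name in params][:MAX_FACETS]
    # Lowercase scheme/host, keep the path, rebuild the query, drop the fragment.
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), parts.path, urlencode(kept), ""))
```

The same function can feed the `rel="canonical"` tag, the sitemap builder, and internal link rendering, so all three agree on a single URL per anchor state.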

3) Operate “Sitemaps as a Fleet” (Segmented by Business Value + Strict Hygiene)

Enterprise retailers should not run “a sitemap.” They should run a sitemap fleet: multiple segmented sitemaps managed through a sitemap index. This gives you a control plane for discovery and a diagnostic surface for indexation issues.

Segmentation matters because bots respond to prioritization signals when your architecture is clean. A fleet approach lets you isolate and accelerate discovery for:

- New launches (time-to-market)

- High-margin SKUs (revenue coverage)

- Core category anchors (demand consolidation)

- Fast-changing inventory (price/stock sensitivity)

But segmentation is only valuable if hygiene is strict. Sitemaps must only contain canonical, 200-status, indexable URLs. Redirects, soft-404s, non-canonicals, and blocked URLs turn sitemaps into a crawl-waste amplifier. Your sitemap system should behave like a curated feed of what you want bots to crawl, not a dump of everything that exists.

| Sitemap Segment | Purpose | Inclusion Rules | KPI to Watch |
| --- | --- | --- | --- |
| Launch / New Inventory | Speed-to-market | Newly published + canonical + indexable | Recrawl latency / time-to-index |
| High-margin / Priority PDPs | Revenue capture | Canonical + 200 + indexable only | Coverage + crawl-to-index alignment |
| Category Anchor PLPs | Consolidate demand | Anchor-only states | Visibility + crawl share |
| Volatile Inventory | Keep search fresh | Frequently changing items | Refresh frequency |

4) Reduce the Rendering Tax on Revenue Templates (Outcome-Driven Rendering Strategy)

Rendering is a crawl budget lever because it changes the “cost per fetch.” When your most important templates are expensive to render, you create friction that can slow discovery and processing, especially at scale where bots are already making triage decisions.

The enterprise approach isn’t ideological (“SSR good, CSR bad”). It’s outcome-driven:

- If bots need JavaScript to see critical content and links, you’re likely paying a discoverability tax.

- If your PLPs and anchor facets drive revenue, they should be reliably digestible without fragile client-side dependency chains.

- If origin performance becomes the bottleneck, bots throttle and your crawl capacity collapses.

A realistic strategy is often selective: SSR/ISR (or other pre-rendering approaches) for anchor templates, while keeping ephemeral states interactive and non-crawlable. The point is to protect the templates where crawl speed actually translates into revenue.

| Situation | What Goes Wrong | Recommended Pattern | How You Know It Worked |
| --- | --- | --- | --- |
| JS hides links/content | Delayed discovery/indexation | SSR/ISR for anchors | Recrawl latency decreases |
| Heavy PLP rendering | High cost per fetch | Pre-render revenue templates | Crawl share shifts to money templates |
| Origin strain from bots | Capacity suppression | Edge caching + route optimization | Bot TTFB stabilizes; fewer throttling signals |
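
A cheap way to spot a rendering tax is to check whether critical links exist in the HTML the server returns, before any JavaScript runs. This sketch uses only the standard library’s `html.parser`; fetching the raw HTML is left to your HTTP client, and the `expected` set (for example, the anchor facets a PLP must link to) is a hypothetical input from your governance matrix. It shows what is missing pre-render, not what a search engine’s renderer eventually sees.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collect absolute href values from <a> tags in server-delivered HTML."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(urljoin(self.base_url, href))


def missing_critical_links(raw_html: str, base_url: str, expected: set) -> set:
    """Critical links absent from the initial HTML, i.e. reachable only after JS runs."""
    collector = LinkCollector(base_url)
    collector.feed(raw_html)
    collector.close()
    return expected - collector.links
```

Buttons with click handlers (a common pattern for “load more” facets) never show up as `<a href>` links, which is exactly what this check is designed to surface on anchor templates.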

5) Measure Crawl Outcomes with Log-Based KPIs (Alignment > Volume)

Enterprise crawl programs fail when teams measure the wrong thing. “Pages crawled per day” is not a success metric. Crawl volume can increase while your indexable coverage and revenue visibility get worse.

You need log-based KPIs that measure whether crawl is allocated to pages that can and should be indexed, and whether priority URLs refresh fast enough to capture demand. The four metrics that consistently separate mature crawl programs from immature ones are:

- Crawl-to-Index Alignment (priority templates)

- Recrawl Latency (revenue and launch URLs)

- Crawl Share by Template (where crawl is spent)

- Wasted Crawl Rate (crawl spent on non-canonical/noindex/redirect/soft-404)

When these are tracked by template class (PDP, PLP, anchor facet, pagination, parameter states), you can tie architectural and governance changes directly to crawl behavior and index outcomes.

| KPI | Definition | How to Calculate (Practical) | Why It Matters |
| --- | --- | --- | --- |
| Crawl-to-Index Alignment | Crawled URLs that are canonical + indexable | From logs: classify crawled URLs; validate index via sampling | Proves crawl is hitting the right surfaces |
| Recrawl Latency | Publish/update → bot fetch | Compare timestamps (CMS) to first log hit | Measures speed-to-market |
| Crawl Share by Template | Crawl distribution by page type | Group logs by template/path/pattern | Shows if crawl is stuck in traps |
| Wasted Crawl Rate | Crawl spent on junk | % hits that are redirects/noindex/non-canonical/soft-404 | Quantifies waste and progress |
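
The four KPIs can be computed from a single table of classified log hits. This sketch assumes each bot fetch has already been joined to crawl-time page signals (status, self-canonical, noindex, soft-404) and a template label; those field names are hypothetical. Crawl-to-index alignment here covers only the log side of the metric, so actual index status still needs sampling, as the table notes.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Fetch:
    url: str
    template: str    # hypothetical labels: PDP, PLP, anchor_facet, param_state...
    status: int
    canonical: bool  # URL is its own canonical
    noindex: bool
    soft_404: bool
    ts: datetime     # bot fetch time from the access log


def is_wasted(f: Fetch) -> bool:
    """Waste = redirects, noindex, non-canonical, or soft-404 (per the KPI table)."""
    return 300 <= f.status < 400 or f.noindex or not f.canonical or f.soft_404


def crawl_kpis(fetches: list) -> dict:
    if not fetches:
        raise ValueError("no fetches in window")
    total = len(fetches)
    by_template = Counter(f.template for f in fetches)
    wasted = sum(is_wasted(f) for f in fetches)
    aligned = sum(f.status == 200 and f.canonical and not f.noindex and not f.soft_404 for f in fetches)
    return {
        "crawl_share": {t: n / total for t, n in by_template.items()},
        "wasted_crawl_rate": wasted / total,
        "crawl_to_index_alignment": aligned / total,  # log side only
    }


def recrawl_latency_hours(published: datetime, fetches: list, url: str) -> Optional[float]:
    """Hours from the CMS publish/update time to the first bot fetch after it."""
    hits = [f.ts for f in fetches if f.url == url and f.ts >= published]
    return (min(hits) - published).total_seconds() / 3600 if hits else None
```

Note that alignment and waste need not sum to 100%: 404s and 5xx responses are neither aligned nor classified as waste here, and are better tracked on the reliability side.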

6) Operate Crawl Health Like Reliability Engineering (SEO Error Budget + Bot Governance, Including AI Crawlers)

At enterprise scale, crawl is fragile because small regressions compound quickly. A minor increase in 5xx, a templating issue that creates redirect loops, or a nav change that reintroduces crawlable parameter paths can shift millions of fetches overnight.

That’s why mature programs treat crawl health as reliability engineering: define SLOs for critical templates, monitor performance/error budgets, and implement release gates when reliability deteriorates.

And in 2026, bot governance must include AI crawlers. Each LLM ecosystem introduces its own crawler behaviors. Some are retrieval-oriented (visibility opportunity), some are training-oriented (exposure risk), and many can be spoofed (security risk). Enterprise retailers should maintain a bot taxonomy and enforce a policy matrix: allow/block, rate-limit, and cache by bot class, so bot traffic doesn’t suppress crawl capacity or create unwanted exposure.

| Reliability / Governance Layer | What You Define | What You Enforce | Outcome |
| --- | --- | --- | --- |
| SEO SLOs | Latency + success rate by template | Release gates + rollback | Stable crawl capacity |
| SEO Error Budget | Allowable failure/latency drift | Freeze high-risk changes when burned | Prevents slow-motion crawl collapse |
| Bot Policy Matrix (incl. AI) | Which bots get access to what | Allow/block/rate/cache + verification | Protect infra + control exposure |

Competitive Differentiation: Go Fish Digital Strategy

While most agencies focus on keyword volume, our Enterprise SEO Services focus on Indexation Control. We specialize in:

- Crawl depth audits to ensure products are reachable within 3 clicks.

- Pagination optimization to ensure bots reach deep-catalog SKUs.

- GEO & SEO modeling to protect your traditional organic revenue while capturing the “Share of Model” in AI search.
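
A crawl depth audit is, at its core, a breadth-first search over the internal link graph starting at the homepage. This sketch assumes the graph comes from a crawler export of crawlable `<a href>` links only (JS-only controls excluded), keyed by hypothetical paths; products deeper than three clicks, or unreachable entirely, are returned for review.

```python
from collections import deque


def click_depths(links: dict, start: str = "/") -> dict:
    """BFS from the start page: fewest clicks needed to reach each URL."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # first visit in BFS order is the shortest path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def too_deep(links: dict, products: list, max_clicks: int = 3, start: str = "/") -> list:
    """Products deeper than max_clicks, or not reachable through crawlable links at all."""
    depth = click_depths(links, start)
    return sorted(p for p in products if depth.get(p, max_clicks + 1) > max_clicks)
```

In a graph where a SKU is linked only from page 3 of a paginated category, that SKU lands at depth 4, which is why pagination optimization and click-depth audits tend to travel together.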

About Noah Atwood
