
Google Mum Update

Published: December 17, 2021



More About Google Mum

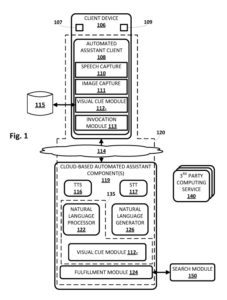

Google was granted a patent on using Google MUM to better answer questions through an automated assistant. This patent provides details on how Google MUM works, and Google has also published at least one paper that describes the process in more detail, which is worth a look. See MUM: A new AI milestone for understanding information by Pandu Nayak. Pandu is a Google Fellow and Vice President, Search, but he is not one of the inventors named on the patent.

What Does the Google MUM Patent Say?

Google MUM can tell you if your hiking boots can be used to climb Mount Fuji if you send Google a photo of your boots. It’s that interaction between an automated assistant and humans that Google has patented.


Here’s a slice from the patent which explains the problem that Google MUM addresses:

Humans may engage in human-to-computer dialogs with interactive software applications referred to herein as “automated assistants” (also referred to as “chatbots,” “interactive personal assistants,” “intelligent personal assistants,” “personal voice assistants,” “conversational agents,” etc.). For example, humans (which when they interact with automated assistants may get referred to as “users”) may provide commands, queries, and/or requests (referred to herein as “queries”) using free form natural language input which may include vocal utterances converted into text and then processed and/or typed free form natural language input. Often, the automated assistant must first be “invoked,” e.g., using predefined oral invocation phrases.

Many computing services (also referred to as “software agents” or “agents”) exist that are capable of interacting with automated assistants. These computing services are often developed and/or provided by what will get referred to herein as “third parties” (or “third-party developers”) because the entity providing a computing service is often not directly affiliated with an entity that provides the automated assistant.

But, computing services are not limited to those developed by third parties, and may get implemented by the same entity that implements the automated assistant. Computing services may get configured to resolve a variety of different user intents, many of which might not be resolvable by automated assistants. Such intents may relate to, but are of course not limited to, controlling or configuring smart devices, receiving step-by-step instructions for performing tasks and interacting with online services. Accordingly, many automated assistants may interact with both users and third-party computing services simultaneously, effectively acting as a mediator or intermediary between the users and the third party.

Some third-party computing services may operate in accordance with dialog state machines that effectively define a plurality of states, as well as transitions between those states, that occur based on various inputs received from the user and/or elsewhere (e.g., sensors, web services, etc.). As a user provides (through an automated assistant as mediator) free form natural language input (vocally or typed) during dialog “turns” with a third-party computing service, a dialog state machine associated with the third-party computing service advances between various dialog states. Eventually, the dialog state machine may reach a state at which the user’s intent gets resolved.
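To make the dialog state machine idea from the passage above more concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the pizza-ordering scenario, the state names, the intent labels, and the `DialogStateMachine` class are all hypothetical and do not come from the patent. It also assumes the automated assistant has already turned each spoken or typed turn into a simple intent label.

```python
from dataclasses import dataclass, field

# Hypothetical transition table for an imaginary pizza-ordering service.
# Keys are (current_state, intent); values are the next state.
# None of these names appear in the patent.
TRANSITIONS = {
    ("start", "order_pizza"): "choose_size",
    ("choose_size", "size_given"): "choose_topping",
    ("choose_topping", "topping_given"): "confirm",
    ("confirm", "yes"): "resolved",
    ("confirm", "no"): "start",
}


@dataclass
class DialogStateMachine:
    state: str = "start"
    history: list = field(default_factory=list)

    def advance(self, intent: str) -> str:
        """Follow a transition if one exists; otherwise stay in the same state."""
        next_state = TRANSITIONS.get((self.state, intent), self.state)
        self.history.append((self.state, intent, next_state))
        self.state = next_state
        return self.state

    @property
    def resolved(self) -> bool:
        """True once the user's intent has been resolved."""
        return self.state == "resolved"


def run_dialog(turns):
    """Feed one intent per dialog turn until the intent is resolved."""
    machine = DialogStateMachine()
    for intent in turns:
        machine.advance(intent)
        if machine.resolved:
            break
    return machine


if __name__ == "__main__":
    dialog = run_dialog(["order_pizza", "size_given", "topping_given", "yes"])
    print(dialog.state, len(dialog.history))
```

Each call to `advance` stands in for one dialog “turn”. An input that matches no transition leaves the machine where it was, which is one simple way to handle a turn the service does not understand.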

This is the first source I have seen that addresses, in detail, the interaction between humans and the search engine when talking about Google MUM. I have written a few other posts about the Google automated assistant, which are worth a look:

- November 27, 2019 – Google Automated Assistant Search Results

- December 13, 2019 – The Google Assistant and Context-Based Natural Language Processing

- March 20, 2020 – How an Automated Assistant May Respond to Queries from Children

- August 5, 2021 – A Note-Keeping Application From Google

Summary of the Google MUM and Automated Assistant Patent

The paper I linked to above about Google MUM provides some examples, including displayed results from the question-and-answer interactions between human beings and the automated assistant. Often this interaction between humans and automated assistants takes place using a phone or a speaker device. (It could take place using a smartwatch as well.)

Rather than break down this patent in more detail, I will link to it, and to some of the other documents that Google has produced about Google MUM, just in case you have any more questions about it. There is a video on YouTube from Google that is worth watching and that tells us more about the Multitask Unified Model behind Google MUM.

Give it a watch as a way to understand it better, and if you would like, read through the patent to get a better sense of how it works with the Google automated assistant.

The video can be watched here:

Google MUM MultiTask Unified Model Introduction

The patent behind Google MUM, which explains how it works with Google’s Automated Assistant, is located here:

Multi-modal interaction between users, automated assistants, and other computing services

Inventors: Ulas Kirazci, Adam Coimbra, Abraham Lee, Wei Dong, Thushan Amarasiriwardena, Yudong Sun, and Xiao Gao

Assignee: GOOGLE LLC

US Patent: 11,200,893

Granted: December 14, 2021

Filed: February 6, 2019

Abstract

Techniques are described herein for multi-modal interaction between users, automated assistants, and other computing services. In various implementations, a user may engage with the automated assistant in order to further engage with a third-party computing service. The user may advance through dialog state machines associated with third-party computing services using both verbal input modalities and input modalities other than verbal modalities, such as visual/tactile modalities.
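The multi-modal point in the abstract can be sketched in a few lines of Python. This is a hedged illustration, not the patent's method: the `UserInput` type, the lookup tables, the button ids, and the three-step walkthrough are all hypothetical. It assumes that speech has already been converted to text and that a tap arrives as the id of the on-screen element the user touched.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserInput:
    modality: str  # "voice", "typed", or "touch" (hypothetical labels)
    payload: str   # recognized text, or the id of the tapped element


# Hypothetical lookup tables: different modalities map to the same intents.
VERBAL_INTENTS = {"next": "advance", "go back": "back"}
TOUCH_INTENTS = {"btn_next": "advance", "btn_back": "back"}

# A made-up step-by-step task exposed by a third-party service.
STEPS = ["step_1", "step_2", "step_3"]


def to_intent(user_input):
    """Normalize an input from any supported modality into a shared intent."""
    if user_input.modality in ("voice", "typed"):
        return VERBAL_INTENTS.get(user_input.payload.strip().lower())
    if user_input.modality == "touch":
        return TOUCH_INTENTS.get(user_input.payload)
    return None  # unsupported modality


def apply_input(index, user_input):
    """Return the new step index after applying one input."""
    intent = to_intent(user_input)
    if intent == "advance":
        return min(index + 1, len(STEPS) - 1)
    if intent == "back":
        return max(index - 1, 0)
    return index  # unrecognized input leaves the dialog where it was


if __name__ == "__main__":
    position = 0
    position = apply_input(position, UserInput("voice", "Next"))
    position = apply_input(position, UserInput("touch", "btn_back"))
    print(STEPS[position])
```

The design choice here is to translate every modality into the same small set of intents before touching the dialog state, so a spoken "next" and a tap on a button move the user through the same steps.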

Conclusion About Google Mum

I have included a link to a Google video about the MultiTask Unified Model behind Google MUM and to a Google AI blog post about the question-answering approach behind Google MUM. This patent provides details about how the automated assistant works with human beings to enable better interactions between them.

Google MUM is reportedly more powerful than earlier Google models such as BERT because it draws on a wider range of information about a topic, including visual aspects. As the abstract from the patent says, the interaction it describes also covers visual/tactile modalities.

About Bill Slawski
