SEO Log Analysis: How Search Engines Actually Crawl Your Site

Most teams feel like they have the data they need.

They can see traffic. Rankings. Impressions. Maybe even page-level performance.

On the surface, it looks complete.

But it’s not.

Because all of that data shows outcomes.

It doesn’t show how those outcomes happened.

What you can’t see (and why it matters)

You can see which pages are getting traffic.

What you can’t see is how search engines actually got there.

Which pages they crawl. How often they come back. Where they spend time. What they skip entirely. And that activity is not marginal: an estimated 51% of website traffic comes from bots.

That’s the gap. And it’s a big one. Because without that visibility, you’re working backwards.

Trying to explain results without seeing the behavior behind them.

Where SEO visibility actually breaks down

Most SEO issues don’t show up clearly in dashboards.

They show up indirectly.

A page isn’t performing. Rankings fluctuate. Traffic doesn’t scale the way you expect.

So you start looking for causes.

Content. Links. Technical issues. And sometimes you find them. But often, the root issue is simpler.

Search engines aren’t interacting with your site the way you think they are.

Important pages might not be crawled frequently. Others might be over-prioritized. Entire sections might be ignored.

And none of that shows up clearly without looking at crawl behavior.

What SEO log analysis actually is (and how it works)

SEO log analysis uses server log data to show how search engines and AI systems actually crawl and interact with your site.

Every time a bot visits your site, it leaves a record.

Log data captures that behavior.

That includes:

- which pages are crawled

- how often they’re accessed

- which bots are visiting

- how crawl patterns change over time

Instead of inferring what’s happening, you can see it directly.
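As a rough illustration of what that record looks like in practice, here is a minimal Python sketch that reads raw access log lines and counts bot requests per page. It assumes your server writes the common "combined" log format; the bot list, the sample lines, and the function names are illustrative and not part of any specific tool. User-agent strings can be spoofed, so treat the labels as a first pass rather than verified identities.

```python
import re
from collections import Counter

# Assumed input: Combined Log Format lines (a common Apache/Nginx setup).
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Illustrative user-agent substrings; extend for the bots you care about.
BOT_TOKENS = {"Googlebot": "googlebot", "Bingbot": "bingbot", "GPTBot": "gptbot"}


def identify_bot(agent):
    """Return a bot label if the user agent contains a known token, else None."""
    lowered = agent.lower()
    for label, token in BOT_TOKENS.items():
        if token in lowered:
            return label
    return None


def count_bot_hits(lines):
    """Count requests per (bot, path) across an iterable of raw log lines."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that do not fit the assumed format
        bot = identify_bot(match["agent"])
        if bot:
            hits[(bot, match["path"])] += 1
    return hits


# Made-up sample lines for demonstration only.
sample = [
    '192.0.2.10 - - [10/May/2026:06:25:14 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.10 - - [11/May/2026:07:02:41 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.20 - - [11/May/2026:09:15:03 +0000] "GET /blog/post-1 HTTP/1.1" 200 8300 "-" "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '192.0.2.30 - - [11/May/2026:09:20:55 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36"',
    'this line is not a valid log entry',
]

hits = count_bot_hits(sample)
for (bot, path), count in hits.most_common():
    print(bot, path, count)
```

Grouping by bot and path covers the first three items in the list above. Adding the date from the `time` field to the key would show how those counts change over time.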

What log data actually reveals

This is where it becomes useful. Log data doesn’t just show activity. It shows patterns.

You can start to see:

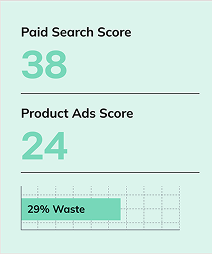

- which pages search engines prioritize

- where crawl budget is being spent

- which pages are rarely or never visited

- how new or updated content is being discovered

Those patterns explain a lot. Why some pages scale. Why others stall. Why certain updates don’t have the impact you expected.
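Two of those patterns are easy to sketch once you have crawl counts per URL. The Python below is a hedged example, not a prescribed method: it assumes you already have a dictionary of path to crawl count (from your logs) and a list of pages you know exist (for example, from a sitemap). The sample data and function names are made up for illustration.

```python
from collections import Counter


def section_of(path):
    """Return the first path segment, e.g. '/blog/post-1' -> '/blog'."""
    clean = path.split("?", 1)[0]  # ignore query strings when grouping
    first = clean.strip("/").split("/")[0]
    return "/" + first if first else "/"


def crawl_share_by_section(crawl_counts):
    """Return each top-level section's share of all crawl requests."""
    totals = Counter()
    for path, count in crawl_counts.items():
        totals[section_of(path)] += count
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {section: count / grand_total for section, count in totals.items()}


def never_crawled(known_paths, crawl_counts):
    """Return known pages that have no crawl requests in the log window."""
    return sorted(p for p in known_paths if crawl_counts.get(p, 0) == 0)


# Made-up numbers for demonstration only.
crawl_counts = {
    "/blog/a": 6,
    "/blog/b": 2,
    "/products/x": 1,
    "/search?q=shoes": 11,
}
known_paths = ["/blog/a", "/blog/b", "/products/x", "/products/y"]

shares = crawl_share_by_section(crawl_counts)
missing = never_crawled(known_paths, crawl_counts)

for section, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{section}: {share:.0%} of crawl requests")
print("Never crawled:", missing)
```

In this invented sample, internal search URLs absorb more than half of the crawl requests while one product page is never visited. That kind of imbalance is what "where crawl budget is being spent" looks like in data.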

Why this matters now, and what it looks like in practice

Crawling has always mattered. But it matters more now. Because search engines and AI systems rely on similar retrieval patterns.

If your content isn’t being accessed, it’s less likely to be evaluated. And if it’s not evaluated, it’s not going to show up, no matter how well it’s written. That’s why understanding crawl behavior is foundational. Not just for technical SEO, but for visibility overall.

You can see this most clearly in complex environments.

In our Bandwidth case study, improving how search engines interacted with key pages helped focus effort where it actually mattered.

Instead of optimizing everything, the team could prioritize pages that were being crawled and had the highest impact potential.

That’s the shift. You stop treating all pages equally. You start working based on how search engines actually interact with your site.

How this fits into a broader system

Log analysis doesn’t replace other SEO work.

It makes it more precise.

- Page Optimization helps improve the pages that matter most

- Content Similarity ensures those pages align with intent

- Page Monitoring tracks what changes and what happens next

You can explore how these connect across the Barracuda Modules.

That’s where things become more predictable. You’re not guessing where to focus. You’re following real signals.

Where to start

Start simple. Look at what search engines are actually doing.

Not what you assume they’re doing.

Find:

- pages that are heavily crawled

- pages that are ignored

- patterns that don’t match your expectations

That’s where the opportunity is.
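One simple way to run that first pass, sketched in Python under stated assumptions: you have crawl counts per URL for some time window and your own list of priority pages. The thresholds (`low`, `high`), the function name, and the sample data are hypothetical starting points to tune, not recommended values.

```python
def find_mismatches(crawl_counts, priority_paths, low=1, high=10):
    """Compare crawl activity with your own priorities.

    Returns two sorted lists:
    - priority pages crawled `low` times or fewer (possibly ignored)
    - non-priority pages crawled `high` times or more (possibly over-crawled)
    """
    under_crawled = sorted(
        path for path in priority_paths if crawl_counts.get(path, 0) <= low
    )
    over_crawled = sorted(
        path
        for path, count in crawl_counts.items()
        if count >= high and path not in priority_paths
    )
    return under_crawled, over_crawled


# Made-up numbers for demonstration only.
crawl_counts = {
    "/pricing": 1,
    "/products/x": 14,
    "/search?q=shoes": 25,
    "/tag/misc": 12,
    "/blog/a": 4,
}
priority_paths = {"/pricing", "/products/x", "/contact"}

under_crawled, over_crawled = find_mismatches(crawl_counts, priority_paths)
print("Priority pages with little or no crawling:", under_crawled)
print("Heavily crawled pages outside your priorities:", over_crawled)
```

Both lists are prompts for investigation rather than verdicts. A page can be rarely crawled for good reasons, and a heavily crawled page may simply change often.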

Key takeaways

- Performance data shows outcomes, not the behavior behind them.

- Crawl activity determines what content gets seen and prioritized.

- Log analysis helps teams focus on what actually drives visibility.

Frequently asked questions about SEO log analysis

What is SEO log analysis?

It’s the process of using server log data to understand how search engines crawl and interact with your site.

Why is crawl data important for SEO?

Because search engines can only rank what they access. Crawl behavior determines visibility.

What can log analysis reveal?

It shows which pages are prioritized, ignored, or crawled inefficiently.

Does this impact AI search results?

Yes. AI systems rely on similar retrieval patterns, so crawl behavior affects inclusion.

Where should I start with log analysis?

Start by identifying crawl patterns and comparing them to your priorities.

Log Analysis is part of Barracuda.

Reach Out to See How Search Engines Actually Interact With Your Site

Most teams only see the outcome. Rankings, traffic, and impressions don’t explain how search engines are actually accessing and evaluating your content.

Barracuda Log Analysis helps teams uncover crawl behavior, identify what’s being prioritized or ignored, and make smarter optimization decisions based on real search engine activity.

About Kimberly Anderson-Mutch
