Problems with Video Search Results

Searchers can run into problems when they are looking for a video in response to a query. A recently granted Google patent describes how Google may respond to queries that surface video search results. The patent tells us that its purpose is “identifying videos or their parts that are relevant to search terms.”

The algorithm behind the patent attempts to solve a problem that the patent’s description lays out in detail.

The patent tells us that people using “media hosting websites” usually browse or search hosted media content, such as videos, by entering keywords or search terms in queries that are matched against “textual metadata describing the media content.” This “textual metadata” can include:

- Titles of media files

- Descriptive summaries of the media content

The patent explains why this may be a problem. It tells us that such textual metadata is often not representative of the entire content of a video, especially if the video is very long and has a variety of scenes.

Usually, the description that accompanies a video is fairly short and does not describe all of the scenes in the video. As a result, a video that a searcher is looking for may not be returned in response to a search on keywords that describe such scenes. As the patent tells us:

Thus, conventional search engines often fail to return the media content most relevant to the user’s search.

Another problem with most media hosting websites is that, because of the large amount of hosted media content, a search query may return hundreds or even thousands of videos responsive to the user query.

This could mean that a user may have a problem deciding which of the video search results are most relevant.

To make it easier for someone to decide which video might be most relevant, a website may present those search results with thumbnail images.

Often, the thumbnail image for a video is a predetermined frame from the video file (possibly the first frame, center frame, or last frame).

This can be a problem because thumbnails selected in this way are often not representative of the content of the video. And that thumbnail may not be relevant to a user’s search query. If it isn’t, a user may not be able to assess which of the many search results are most relevant.

Because of these problems with video search results, this patent attempts to provide improved methods of finding and presenting video search results that will allow a user to easily assess the relevance of those videos.

Improved Video Search Results

This approach finds and presents video results that are responsive to a user keyword query. The system:

- Receives a keyword query from a searcher

- Selects a video having content that is relevant to the keyword query

- Chooses a frame from the video that is representative of the video’s content using a video index which stores keyword association scores between frames of several videos and keywords associated with the video frames

- Shows the selected frame as a thumbnail for the video
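
The steps above can be sketched in a few lines of Python. This is a minimal illustration, not the patent's implementation: the `VideoIndex` layout, the `search` function, the sample scores, and the choice to sum keyword scores per frame are all assumptions made for the example.

```python
from typing import Dict, List, Tuple

# Hypothetical index layout: video id -> one {keyword: score} dict per frame.
VideoIndex = Dict[str, List[Dict[str, float]]]


def frame_score(frame_keywords: Dict[str, float], terms: List[str]) -> float:
    """Sum the keyword association scores of a frame for the query terms."""
    return sum(frame_keywords.get(term, 0.0) for term in terms)


def search(index: VideoIndex, query: str) -> List[Tuple[str, int, float]]:
    """Return (video_id, thumbnail_frame, score), best match first."""
    terms = query.lower().split()
    results = []
    for video_id, frames in index.items():
        if not frames:
            continue  # nothing to score for a video with no indexed frames
        scores = [frame_score(frame, terms) for frame in frames]
        # The best-scoring frame doubles as the thumbnail for this video.
        best = max(range(len(scores)), key=scores.__getitem__)
        if scores[best] > 0:
            results.append((video_id, best, scores[best]))
    results.sort(key=lambda r: r[2], reverse=True)
    return results


# Made-up scores for two videos (frames are numbered from 0).
index: VideoIndex = {
    "movie": [{"city": 0.9}, {"car": 0.8, "race": 0.7}, {"beach": 0.6}],
    "cooking_show": [{"kitchen": 0.9}, {"pasta": 0.8}],
}
results = search(index, "car race")
for video_id, frame_no, score in results:
    print(video_id, frame_no, round(score, 2))
```

With this sample data, the query “car race” returns the movie with its second frame (frame 1) as the thumbnail, while the cooking show is left out because none of its frames has a score for either keyword.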

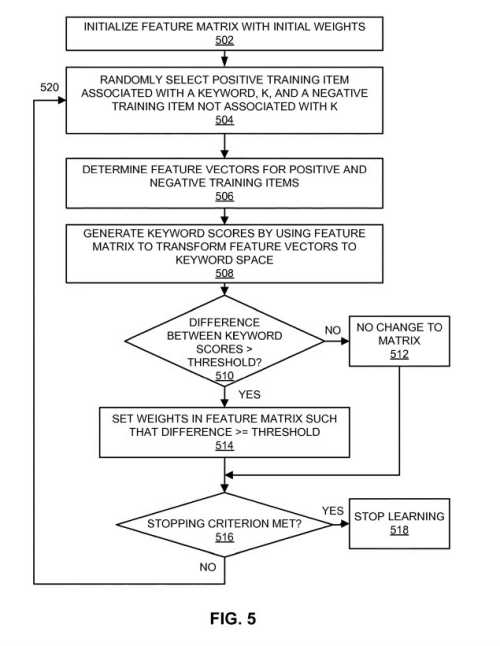

How This System Uses a Machine-Learned Model to Return Video Search Results

This system does this by:

- Creating a searchable video index with a machine-learned model of the relationships between features of video frames, and keywords descriptive of video content

- Receiving a labeled training dataset that includes a set of media items (e.g., images or audio clips) together with one or more keywords descriptive of the content of those media items

- Extracting features that characterize the content of the media items

- Learning correlations between particular features and the keywords descriptive of the content

- Creating a video index which maps frames of videos in a video database to keywords based on features of the videos and the machine-learned model
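
One way to picture the training and indexing steps above is the sketch below. It is a deliberately simple stand-in and is not taken from the patent: each keyword is represented by the average feature vector of the training items labeled with it, and a frame's keyword score is the cosine similarity between the frame's features and that average. The function names, the two-dimensional feature vectors, and the sample data are all hypothetical.

```python
from collections import defaultdict
from math import sqrt
from typing import Dict, List, Tuple

Vector = List[float]


def train_keyword_model(
    training_set: List[Tuple[Vector, List[str]]]
) -> Dict[str, Vector]:
    """Learn one average feature vector (centroid) per keyword."""
    sums: Dict[str, Vector] = {}
    counts: Dict[str, int] = defaultdict(int)
    for features, keywords in training_set:
        for kw in keywords:
            if kw not in sums:
                sums[kw] = [0.0] * len(features)
            sums[kw] = [s + f for s, f in zip(sums[kw], features)]
            counts[kw] += 1
    return {kw: [s / counts[kw] for s in vec] for kw, vec in sums.items()}


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_video_index(
    videos: Dict[str, List[Vector]], model: Dict[str, Vector]
) -> Dict[str, List[Dict[str, float]]]:
    """Map every frame of every video to a {keyword: score} dict."""
    return {
        video_id: [
            {kw: cosine(frame, centroid) for kw, centroid in model.items()}
            for frame in frames
        ]
        for video_id, frames in videos.items()
    }


# Made-up labeled media items: (extracted features, descriptive keywords).
training_set = [
    ([1.0, 0.0], ["car"]),
    ([0.9, 0.1], ["car", "race"]),
    ([0.0, 1.0], ["beach"]),
]
model = train_keyword_model(training_set)

# Made-up per-frame features for one video.
videos = {"movie": [[0.0, 1.0], [1.0, 0.0]]}
index = build_video_index(videos, model)
```

In this toy data, the second frame of the movie ends up scoring far higher for “car” than for “beach”, so a video index built this way could surface that frame for a car-related query even if the video's title and description never mention cars.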

The patent tells us that the advantage of using the process from this patent is that this video hosting system finds and presents search results based on the actual content of the videos instead of relying solely on textual metadata found near videos. It enables a searcher to better assess the relevance of videos from search results.

This video search results patent can be found at:

Relevance-based image selection

Inventors: Gal Chechik and Samy Bengio

Assignee: Google LLC

US Patent: 10,614,124

Granted: April 7, 2020

Filed: April 15, 2015

Abstract

A system, computer-readable storage medium, and computer-implemented method presents video search results responsive to a user keyword query. The video hosting system uses a machine learning process to learn a feature-keyword model associating features of media content from a labeled training dataset with keywords descriptive of their content. The system uses the learned model to provide video search results relevant to a keyword query based on features found in the videos. Furthermore, the system determines and presents one or more thumbnail images representative of the video using the learned model.

Video Search Results Takeaways

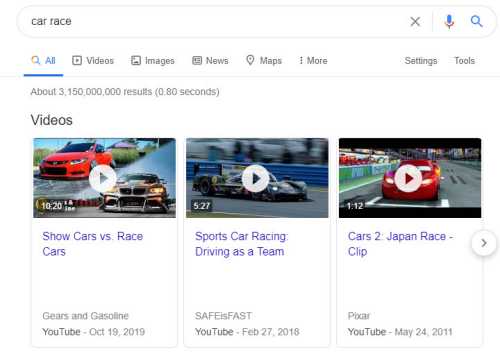

When Google uses this approach, it pays attention to all scenes in a video. In a long video, a scene such as a car race may not be described in the metadata that accompanies the video. The patent tells us that:

For example, if the user enters the search query “car race,” the video search engine can find and return a car racing scene from a movie, even though the scene may only be a short portion of the movie that is not described in the textual metadata.

The process described in this patent wouldn’t require someone to do anything special or different. It would just mean that Google could do a better job of returning videos that might contain content that a searcher might be looking for, like a car racing scene from a movie.

To do this, a video search engine might select a thumbnail image or a set of thumbnail images to display with each retrieved search result.

That thumbnail image may be an image frame that is representative of the video’s audio-visual content and also responsive to a searcher’s query. It can assist a searcher in determining the relevance of the search result.

A video annotation engine can annotate frames or scenes of video from a video database with keywords relevant to the audio-visual content of the frames or scenes, and store these annotations in the video annotation index. That index is what would get searched when a searcher is looking for a result.
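
As a rough illustration of what such an annotation index could look like, the sketch below builds an inverted index from keywords to the frames annotated with them. The threshold value, the function names, and the sample scores are assumptions for the example and do not come from the patent.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

FrameRef = Tuple[str, int]  # (video id, frame number)


def build_annotation_index(
    frame_scores: Dict[str, List[Dict[str, float]]], threshold: float = 0.5
) -> Dict[str, List[FrameRef]]:
    """Annotate each frame with the keywords that score at or above threshold."""
    index: Dict[str, List[FrameRef]] = defaultdict(list)
    for video_id, frames in frame_scores.items():
        for frame_no, scores in enumerate(frames):
            for keyword, score in scores.items():
                if score >= threshold:
                    index[keyword].append((video_id, frame_no))
    return dict(index)


def find_scenes(index: Dict[str, List[FrameRef]], query: str) -> Set[FrameRef]:
    """Return the frames annotated with every term in the query."""
    terms = query.lower().split()
    if not terms:
        return set()
    hits = set(index.get(terms[0], []))
    for term in terms[1:]:
        hits &= set(index.get(term, []))
    return hits


# Made-up keyword scores per frame for two videos.
frame_scores = {
    "movie": [{"city": 0.9, "car": 0.2}, {"car": 0.8, "race": 0.7}],
    "documentary": [{"race": 0.6, "horse": 0.9}],
}
annotation_index = build_annotation_index(frame_scores)
car_race_hits = find_scenes(annotation_index, "car race")
race_hits = find_scenes(annotation_index, "race")
```

With this data, “car race” matches only the second frame of the movie, while “race” alone also matches the documentary. The search never looks at titles or descriptions, only at the per-frame annotations.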

The patent provides much more detail on how content from a video could be indexed based upon keywords that could be used to annotate frames from the video.

This process can mean that more relevant videos are returned for searchers’ queries, based upon the actual content of those videos rather than just the textual metadata that accompanies a video.

If you submit videos to sites such as YouTube, you can test Google search to see if it is returning those videos based upon more than just the metadata that accompanies those videos.
