Answer Passages and Featured Snippets

There hasn’t been an answer to this question about featured snippets until now. A newly granted patent says that an answer passage might be selected based upon a score that reflects whether there is both structured data (such as schema) and unstructured data (such as prose passages) to provide an answer.

Google was granted a patent last week that describes how a search engine processes queries that ask questions.

The patent tells us what makes question-answering featured snippet results different:

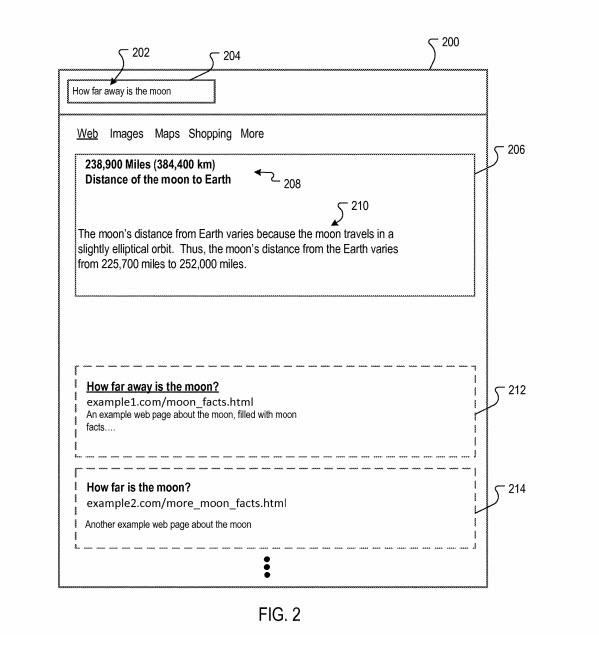

Users of search systems are often searching for an answer to a specific question, rather than a listing of resources. For example, users may want to know what the weather is in a particular location, a current quote for a stock, the capital of a state, etc. When queries that are in the form of a question are received, some search engines may perform specialized search operations in response to the question format of the query. For example, some search engines may provide information responsive to such queries in the form of an “answer,” such as information provided in the form of a “one box” to a question.

I’m reminded of Google dictionary results, which I wrote about back in 2006 in the post Looking at Google Definitions. That reference to a one-box type of answer also reminds me of Google’s one box patent, which tells us about the large amount of data that Google might look at when it decides to return a one box result. I wrote about an update to the one box patent in 2017 in the post Google Updates Their One Box Patent.

What are Candidate Answer Passages?

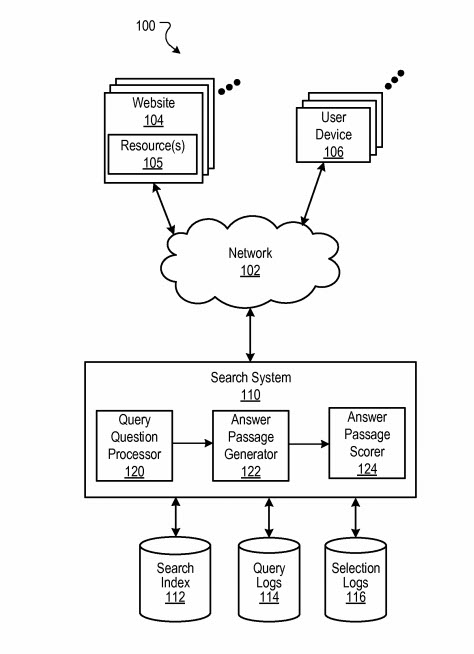

This new patent about question answering at Google introduces the concept of candidate answer passages, which it defines near its start:

Some question queries are better served by explanatory answers, which are also referred to as “long answers” or “answer passages.” For example, for the question query [why is the sky blue], an answer explaining Rayleigh scatter is helpful. Such answer passages can be selected from resources that include text, such as paragraphs, that are relevant to the question and the answer. Sections of the text are scored, and the section with the best score is selected as an answer.

Knowing something about how Google might score an answer passage may improve your chances of writing a passage on your page that Google could use to answer a query such as [why is the sky blue].

How are answer passages scored by Google?

First, Google looks at a received query to see what type of response it appears to call for. Is it a question query that is looking for a featured snippet, along with “data identifying resources determined to be responsive to the query”?

The data resources that an answer comes from may be scored based upon the following factors:

The resource contains several passages, with each of those being content that is eligible to be included as an answer.

The passages may be judged based upon “selection criterion” that may look at:

- Whether there is structured data (such as schema) and unstructured content (such as text on a web page) that responds to the query as an answer.

- Whether the resource is separate and distinct from the search results that might be shown in addition to an answer passage.
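To make the idea of selection criteria more concrete, here is a minimal Python sketch of how passage units on a page might be filtered and grouped into candidate answer passages. Everything in it is an assumption for illustration: the `PassageUnit` class, the two criteria functions, and the `max_units` limit are hypothetical names and rules, not anything the patent spells out. The only idea taken from the patent is that one set of criteria applies to structured content and another to unstructured content.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PassageUnit:
    text: str
    structured: bool  # True for e.g. a table row or list item, False for prose


Criterion = Callable[[PassageUnit], bool]


# Hypothetical criterion for unstructured content: the unit is a complete sentence.
def prose_is_full_sentence(unit: PassageUnit) -> bool:
    return unit.text.strip().endswith((".", "?", "!"))


# Hypothetical criterion for structured content: the unit is short.
def structured_is_short(unit: PassageUnit) -> bool:
    return len(unit.text.split()) <= 12


UNSTRUCTURED_CRITERIA: List[Criterion] = [prose_is_full_sentence]
STRUCTURED_CRITERIA: List[Criterion] = [structured_is_short]


def unit_passes(unit: PassageUnit) -> bool:
    # Pick the criteria subset that matches the kind of content.
    criteria = STRUCTURED_CRITERIA if unit.structured else UNSTRUCTURED_CRITERIA
    return all(check(unit) for check in criteria)


def candidate_passages(units: List[PassageUnit], max_units: int = 3) -> List[str]:
    """Group consecutive passing units into candidates of up to max_units each."""
    candidates: List[str] = []
    current: List[str] = []
    for unit in units:
        if unit_passes(unit):
            current.append(unit.text)
            if len(current) == max_units:
                candidates.append(" ".join(current))
                current = []
        elif current:
            # A failing unit ends the candidate being built.
            candidates.append(" ".join(current))
            current = []
    if current:
        candidates.append(" ".join(current))
    return candidates


units = [
    PassageUnit("The sky is blue because of Rayleigh scattering.", False),
    PassageUnit("Shorter wavelengths scatter more", False),  # no end punctuation
    PassageUnit("Blue: about 450 nm", True),
    PassageUnit("Red: about 700 nm", True),
]
candidates = candidate_passages(units)
```

In this toy run, the second unit fails the prose criterion, so the page yields two candidates: one made of prose and one made of the two structured units. A real system would then score each candidate.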

Why Does Google Want Structured and Unstructured Content for their Answer Passages?

The patent refers to this requirement as an advantage of the process behind the patent.

By requiring both, Google tells us that the unstructured content allows the searcher to receive “prose-type explanations,” while the structured content enables factual information to be returned. An answer can therefore be a combination of prose and facts, which can be very relevant to what the searcher was trying to find.

Addressing Searcher’s Informational Needs with Answer Passages

The patent tells us that when they score candidate answer passages, they look at query dependent and query independent signals.

Query Dependent signals are ones that are based upon how relevant a passage might be for the terms used in the query. So, for a question asking whether Rami Malek sang in the movie Bohemian Rhapsody, a passage would score higher on query dependent signals if it mentioned the actor and the movie and was about him singing.

Query Independent signals are ones that look at things other than relevance to query terms, such as the number of links pointing to the page a passage is on, or how fresh and timely that page may be if the question involved very timely news (such as Bohemian Rhapsody winning best drama movie at the Golden Globes).

The patent says of this scoring, based upon both query dependent and query independent signals, that:

the query dependent signals may be weighted based on the set of most relevant resources, which tends to surface answer passages that are more relevant than passage scored on a larger corpus of resources. This, in turn, reduces processing requirements and readily facilitates a scoring analysis at query time.
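As a rough illustration of combining the two kinds of signals, the Python sketch below scores a passage with a query dependent part (term overlap with the query) and a query independent part (links and freshness). The function names, the 0.7 weight, the cap of 100 links, and the 30-day freshness scale are all assumptions chosen for the example; the patent does not give formulas or weights.

```python
import re


def tokenize(text: str) -> set:
    # Lowercase the text and keep runs of letters and digits as terms.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def query_dependent_score(query: str, passage: str) -> float:
    """Fraction of query terms that also appear in the passage (0.0 to 1.0)."""
    query_terms = tokenize(query)
    if not query_terms:
        return 0.0
    return len(query_terms & tokenize(passage)) / len(query_terms)


def query_independent_score(inbound_links: int, age_days: float) -> float:
    """Blend of link count and freshness, ignoring the query entirely."""
    link_part = min(inbound_links, 100) / 100   # assumed cap at 100 links
    fresh_part = 1.0 / (1.0 + age_days / 30.0)  # assumed 30-day decay scale
    return 0.5 * link_part + 0.5 * fresh_part


def answer_score(query: str, passage: str, inbound_links: int,
                 age_days: float, w_dependent: float = 0.7) -> float:
    # Weighted sum of the two signal families; the weight is hypothetical.
    dependent = query_dependent_score(query, passage)
    independent = query_independent_score(inbound_links, age_days)
    return w_dependent * dependent + (1.0 - w_dependent) * independent


query = "did rami malek sing in bohemian rhapsody"
passage_a = "Rami Malek and his singing in the movie Bohemian Rhapsody"
passage_b = "Bohemian Rhapsody won a Golden Globe"

score_a = answer_score(query, passage_a, inbound_links=20, age_days=10)
score_b = answer_score(query, passage_b, inbound_links=20, age_days=10)
```

With the same link count and age for both pages, `passage_a` scores higher because it shares more terms with the query. Changing only `inbound_links` or `age_days` moves the score without any change in the passage text, which is the sense in which those signals are query independent.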

Earlier patents that talked about providing answers for queries that contained questions said that they were looking for answers from high authority sites, but didn’t provide as much detail. I wrote about one of those in the post Direct Answers – Natural Language Search Results for Intent Queries. It’s hard to believe I wrote that five years ago. I’ve been waiting ever since for something that said Google might be looking at structured data for answers to those questions.

This newly granted patent was originally filed in 2015. By telling us that having structured data on a page may increase its chances of appearing in featured snippets, it provides another good reason to include structured data on your site.
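For readers who want to see what schema-style structured data for a question and answer can look like, here is a small Python sketch that builds schema.org `FAQPage` markup as JSON-LD. The choice of `FAQPage` is my assumption for the example; the patent does not name any particular schema type, and the helper name `faq_jsonld` is made up for illustration.

```python
import json


def faq_jsonld(question: str, answer: str) -> str:
    """Return schema.org FAQPage markup for one question as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld(
    "Why is the sky blue?",
    "Sunlight is scattered by the atmosphere, and blue light is scattered "
    "more than red light (Rayleigh scattering).",
)
```

The resulting string would normally be placed inside a `<script type="application/ld+json">` element on the page, alongside a prose passage that answers the same question, since the patent describes looking at both structured and unstructured content.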

The patent is:

Candidate answer passages

Inventors: Steven D. Baker, Srinivasan Venkatachary, Robert Andrew Brennan, Per Bjornsson, Yi Liu, Nitin Gupta, Diego Federici, and Lingkun Chu

Assignee: Google LLC

US Patent: 10,180,964

Granted: January 15, 2019

Filed: August 12, 2015

Abstract

Methods, systems, and apparatus, including computer programs encoded on a computer storage medium, for generating candidate answer passages. In one aspect, a method includes receiving a query determined to be a question query and data identifying resources determined to be responsive to the query; for each resource in a top-ranked subset of the resources: identifying a plurality of passage units in the resource; applying a set of passage unit selection criterion to the passage units, each passage unit selection criterion specifying a condition for inclusion of a passage unit in a candidate answer passage, wherein the first subset of passage unit selection criteria applies to structured content and the second subset of passage unit selection criteria applies to unstructured content; and generating, from passage units that satisfy the set of passage unit selection criterion, a set of candidate answer passages.

Added October 15, 2020 – I have written a few other posts about answer passages that are worth reading if you are interested in how Google finds questions on pages and answers to those, and scores answer passages to determine which ones to show as featured snippets. Here are those posts:

- September 23, 2020 – Featured Snippet Answer Scores Ranking Signals

- October 9, 2020 – Adjusting Featured Snippet Answers by Context

- October 14, 2020 – Weighted Answer Terms for Scoring Answer Passages
