I like visiting small towns and exploring their history. I grew up near Princeton, New Jersey, which had a lot of history to investigate. I lived in Newark, Delaware, which had a rich history as well. I then moved to Warrenton, Virginia, which was in the middle of the Civil War, changed hands over 60 times during the war, and has many monuments and lots of stories. I’ve been digging into the history of San Diego recently, and there’s a lot to learn about here as well. It would be nice to be able to query Google about things that I am finding, like, “OK Google, what is this statue of a surfer near the beach in Cardiff, California?” The last time I visited Washington, DC, I didn’t know to whom some of the monuments I came across were dedicated, and I would have liked to find out.

Imagine that Google started answering questions about entities near you, such as “What time does this restaurant in front of me open?” or “What is the name of the monument across the street?”

That’s a capability that a Google patent application published at the end of February suggests we might see from the search engine at some point.

The way this patented process works is that it might look at query patterns associated with entities that are close to the approximate location of the device you are searching on. Those linguistic patterns may come from previous queries and could be things like “What time does X open?” or “What is the name of X?” Google could search through query log files, associate queries with specific locations, and find the patterns tied to those locations to understand which entity a query from the same location might be about. Using query patterns and knowledge of nearby entities could allow the search engine to rewrite your query to “explicitly reference the entity” that your query may have intended. So, “what is this entity in front of me” could be rewritten by Google to “What is the Washington Monument?”
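The first step, finding entities close to the device’s approximate location, can be illustrated with a minimal Python sketch. The patent does not say how “close” is measured; this sketch assumes a great-circle (haversine) distance and a hypothetical 250-meter radius, and the place coordinates are approximate values chosen only for illustration.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


@dataclass
class Place:
    name: str
    lat: float
    lng: float


def haversine_m(lat1: float, lng1: float, lat2: float, lng2: float) -> float:
    """Great-circle distance in meters between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lng2 - lng1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def nearby(places, lat: float, lng: float, radius_m: float = 250.0) -> list:
    """Places within radius_m (a hypothetical cutoff) of the device, closest first."""
    scored = sorted(
        ((haversine_m(lat, lng, p.lat, p.lng), p) for p in places),
        key=lambda pair: pair[0],
    )
    return [p for dist, p in scored if dist <= radius_m]


# Approximate coordinates, for illustration only.
PLACES = [
    Place("Washington Monument", 38.8895, -77.0353),
    Place("Lincoln Memorial", 38.8893, -77.0502),
]
```

For a device standing near the Washington Monument (roughly 38.8899, -77.0340), only the monument falls inside the radius; the Lincoln Memorial, more than a kilometer to the west, does not.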

Benefits of Using This Process

Among the advantages of this process listed in the patent is that a searcher does not need to know the actual name of the entity that is the subject of their query. A query such as [what is this monument] would still work, which could be very helpful if you don’t know how to pronounce or spell the name of the entity. This could also help when vacationing in a country where you may not speak the native language, as in this example from the patent:

For example, a user that does not speak German can be on vacation in Zurich, Switzerland, and can submit the query [opening hours], while standing near a restaurant called “Zeughauskeller,” which may be difficult to pronounce and/or spell for the user. As another example, implementations of the present disclosure enable users to more conveniently and naturally interact with a search system (e.g., submitting the query [show me lunch specials] instead of the query [Fino Ristorante & Bar lunch specials]).

The patent application is:

Interpreting User Queries Based on Nearby Locations

Invented by: Nils Grimsmo and Behshad Behzadi

Pub. No.: WO/2016/028696

Published: 25.02.2016

Filed: 17.08.2015

Abstract:

Methods, systems, and apparatus, including computer programs encoded on a computer storage medium, for receiving a query provided from a user device, and determining that the query implicitly references some entity, and in response: obtaining an approximate location of the user device, obtaining a set of entities comprising one or more entities, each entity in the set of entities being associated with the approximate location, selecting an entity from the set of entities based on one or more entity query patterns associated with the entity, and providing a revised query based on the query and the entity, the revised query explicitly referencing the entity.
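The steps in the abstract can be sketched in a few lines of Python. This is not Google’s implementation: the cue phrases used to spot an implicit reference, the pattern counts, and the rule of picking the matching pattern seen most often are all hypothetical stand-ins, and the nearby entities are assumed to have already been looked up from the device’s approximate location.

```python
import re
from dataclasses import dataclass, field

# Hypothetical cue phrases for an implicit reference (not taken from the patent).
IMPLICIT_CUES = re.compile(
    r"\b(?:(?:this|that)(?: \w+)?|here|in front of me|across the street)\b"
)


@dataclass
class Entity:
    name: str
    # Entity query pattern -> hypothetical count of how often it was seen in logs.
    patterns: dict = field(default_factory=dict)


def strip_cues(query: str) -> str:
    """Lowercase, drop punctuation and implicit-reference cues, collapse spaces."""
    q = re.sub(r"[^\w\s]", " ", query.lower())
    return " ".join(IMPLICIT_CUES.sub(" ", q).split())


def revise_query(query: str, nearby_entities: list):
    """Return a revised query that names one nearby entity explicitly, or None."""
    lowered = query.lower()
    # Already explicit: one of the nearby entities is named in the query.
    if any(e.name.lower() in lowered for e in nearby_entities):
        return None
    stem = strip_cues(query)
    best = None  # (count, revised query)
    for entity in nearby_entities:
        for pattern, count in entity.patterns.items():
            # "opening hours *" -> "opening hours": the pattern minus its entity slot.
            pattern_stem = " ".join(pattern.replace("*", " ").split())
            if pattern_stem == stem and (best is None or count > best[0]):
                best = (count, pattern.replace("*", entity.name))
    return best[1] if best else None


# Illustrative entities near the patent's Zurich example; the counts are made up.
zeughauskeller = Entity("Zeughauskeller", {"opening hours *": 40, "what time does * open": 25})
paradeplatz = Entity("Paradeplatz", {"what is *": 10})
```

With these example entities, the patent’s query [opening hours] would come back as [opening hours Zeughauskeller], while a query that already names one of the entities is left alone and the function returns None.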

Takeaways

The patent filing provides more in-depth information about the technology behind this process. One aspect I find interesting is that it references the machine ID (MID) numbers found on the pages and URLs of Freebase entities. These MIDs are one way for Google to track and index entities across the Web, like the MID for Alcatraz Island (/m/0h594) mentioned in the following quote from the patent:

In some implementations, a plurality of entities can be provided in one or more databases. For example, a plurality of entities can be provided in a table that can provide data associated with each entity. Example data can include a name of the entity, a location of the entity, one or more types assigned to the entity, one or more ratings associated with the entity, a set of entity query patterns associated with the entity, and any other appropriate information that can be provided for the entity. In some examples, an entity can be associated with a unique identifier within the one or more databases. For example, the entity Alcatraz Island can be assigned the identifier /m/0h594.
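The entity table described in that passage might look something like the following Python sketch. The field names are assumptions based on the list in the quote, and apart from the Alcatraz Island identifier taken from the patent, the example values (coordinates, type, query pattern) are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class EntityRecord:
    """One row of a hypothetical entity table, using the fields listed in the patent."""
    mid: str                       # unique identifier, e.g. "/m/0h594"
    name: str
    location: tuple                # (latitude, longitude)
    types: list = field(default_factory=list)
    ratings: list = field(default_factory=list)
    query_patterns: set = field(default_factory=set)


class EntityTable:
    """Entities indexed by their unique identifier."""

    def __init__(self):
        self._by_mid = {}

    def add(self, record: EntityRecord) -> None:
        # Identifiers must be unique within the table.
        if record.mid in self._by_mid:
            raise ValueError(f"duplicate identifier: {record.mid}")
        self._by_mid[record.mid] = record

    def get(self, mid: str):
        """Look up a record by identifier; returns None if it is unknown."""
        return self._by_mid.get(mid)

    def with_type(self, type_name: str) -> list:
        """All records that have been assigned the given type."""
        return [r for r in self._by_mid.values() if type_name in r.types]


table = EntityTable()
table.add(EntityRecord(
    mid="/m/0h594",                 # identifier quoted in the patent
    name="Alcatraz Island",
    location=(37.8267, -122.4230),  # approximate, for illustration
    types=["island"],               # hypothetical
    query_patterns={"ferry to *"},  # hypothetical
))
```

Keying the table on the identifier rather than the name keeps lookups stable even when an entity is known by several names, such as a formal name and a local nickname.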

The patent application also describes how query patterns could be identified from queries found in a query log and used to help rewrite searchers’ queries. It points to example query patterns such as [ratings], [restaurant ratings], and [theater ratings]. So, for example, you could find a place named “Awesome Pizza” and ask Google, “Any ratings for this pizza place?” It might then rewrite that query to [ratings awesome pizza].

Example query patterns for a hotel could include things such as [how many suites *], [how many conference rooms *], and [reservation *].
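One way to picture how such patterns could come from a query log is to replace the entity’s name in each logged query with a “*” placeholder and count the results. The Python sketch below assumes that approach, along with a hypothetical minimum count, and treats “*” as standing for one or more characters when matching a new query; the patent doesn’t spell out either detail, and the log entries are made up.

```python
import re
from collections import Counter


def mine_patterns(log_queries, entity_name, min_count=2):
    """Replace the entity name with '*' in logged queries and keep frequent patterns."""
    name = re.compile(re.escape(entity_name.lower()))
    counts = Counter()
    for query in log_queries:
        query = " ".join(query.lower().split())  # normalize case and spacing
        if name.search(query):
            counts[name.sub("*", query)] += 1
    # min_count is a hypothetical threshold for filtering out one-off queries.
    return {pattern: n for pattern, n in counts.items() if n >= min_count}


def matches(pattern, query):
    """True if the query fits the pattern, with '*' standing for one or more characters."""
    regex = ".+".join(re.escape(part) for part in pattern.split("*"))
    return re.fullmatch(regex, " ".join(query.lower().split())) is not None


# Made-up log entries for illustration.
log = [
    "ratings awesome pizza",
    "Ratings  Awesome Pizza",
    "awesome pizza hours",
    "pizza near me",
]
patterns = mine_patterns(log, "Awesome Pizza")
```

Here the two “ratings” queries collapse into the single pattern [ratings *], while the one-off [* hours] is dropped by the threshold; a hotel pattern like [reservation *] would then match any query that starts with “reservation” followed by a name.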

I’m excited by this patent and hope we see a search enabled like this at Google that allows us to search based upon the entities that are located near us.

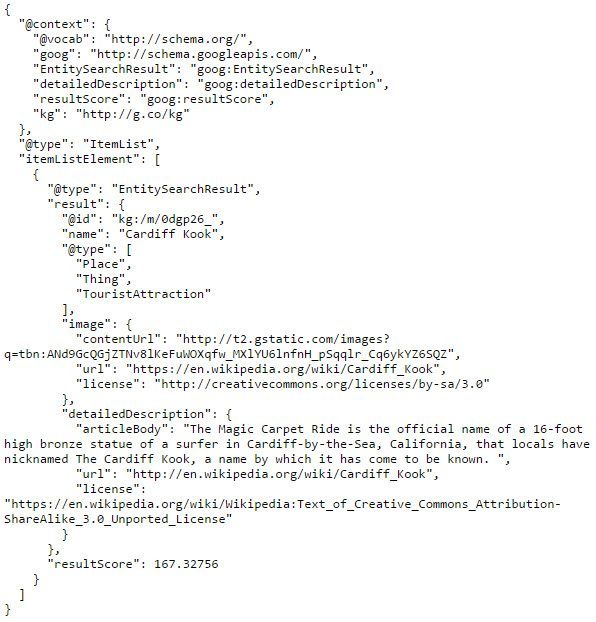

Added 2016/03/02: I had to try this out to see whether Google had turned it on yet. A few blocks toward the beach from me is a statue of a surfer, known locally as “The Cardiff Kook,” though that’s not the real name of the monument. I typed [what is this monument] into Chrome, and I didn’t get the real name, or even this local nickname. I looked the statue up using Google’s Knowledge Graph Search API, and that gave me its real name. I was hoping that this might work, but I needed to test it and see. I’ll try again to see if Google starts answering queries like this; I like to believe that it will at some point.
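For anyone who wants to repeat that kind of lookup, a Knowledge Graph Search API request is a GET to the entities:search endpoint with query and key parameters. This Python sketch only builds the request URL and reads identifiers and names out of an already-decoded JSON response; the API key is a placeholder, the search phrase is hypothetical, and the function names are my own.

```python
from urllib.parse import urlencode

# Knowledge Graph Search API endpoint.
KG_ENDPOINT = "https://kgsearch.googleapis.com/v1/entities:search"


def kg_search_url(query: str, api_key: str, limit: int = 5) -> str:
    """Build a request URL for the Knowledge Graph Search API (no network call)."""
    params = {"query": query, "key": api_key, "limit": limit}
    return f"{KG_ENDPOINT}?{urlencode(params)}"


def entity_names(response: dict) -> list:
    """Return (id, name) pairs from a decoded JSON response."""
    return [
        (item["result"].get("@id"), item["result"].get("name"))
        for item in response.get("itemListElement", [])
    ]


# Hypothetical search phrase and placeholder key.
url = kg_search_url("Cardiff surfer statue", "YOUR_API_KEY")
```

Fetching that URL (for example with urllib.request.urlopen) and passing the json.loads result of the body to entity_names would list the matching entities along with their kg:/m/… identifiers.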

Google KG Search API for the Cardiff Kook

Added 6/1/2016: The Google Blog today announced a feature much like the one described in this patent, in a post titled “Now on Tap update: Text Select and Image Search,” where they tell us:

And for certain images or objects, you can also search via your camera app in real-time. If you’re standing in front of the Bay Bridge, you can hold up your phone, open your camera app, touch and hold the home button, and get a helpful card with deep links to relevant apps. This works for more than just famous structures like the Bay Bridge, you can even point your camera at a movie poster or magazine and get additional info about what you’re looking at.

I think I need to visit the Cardiff Kook again, take a photo of it, and then hold down the home button to see if Google serves me a card for it.
